Website authority is the deciding signal for whether AI cites your brand in 2026. Not your homepage copy. Not your keyword density. Not the raw volume of pages you publish. The compounded judgement that ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews have built about whether your site is trustworthy enough to recommend: that is what determines whether an AI agent answers a customer's question with your name in it, or with a competitor's.

This guide covers the three layers that compound into authority (domain authority, page authority, and trust signals), the backlink engine that powers all three, and the toxic links that quietly undermine every layer. Throughout, the lens is AI visibility, because in 2026 the same authority that wins Google rankings is the authority that wins AI citations.

Key Takeaways

- Authority is now the primary signal that determines whether AI platforms cite your brand: domain-level trust repeatedly ranks as the strongest predictor of AI citation probability in industry analyses.

- Authority is built in three compounding layers: domain authority, page-level authority, and trust signals (E-E-A-T). Backlinks are the connective tissue that flows through all three.

- Page authority is the layer where rankings are actually won: Google ranks individual URLs and AI cites individual pages, not domains.

- Trust is the foundation everything else rests on. Untrustworthy pages have low E-E-A-T no matter how authoritative they appear, and AI platforms are even more conservative about trust than search engines because they amplify whatever they cite.

- Original research, editorial backlinks, structured data, and entity consistency are the highest-leverage moves. Buying links, link exchange schemes, and toxic backlink patterns degrade every layer at once.

Why Authority Matters More in the AI Era

The shift in how people find information has changed which signals carry weight. Gartner forecasts that traditional search volume will drop 25% by 2026 as users move to AI assistants. Search is not going away, but the share of decisions made inside an AI conversation is growing fast. The brands cited in those conversations are the brands that get the work.

AI platforms behave differently from search engines. When Google ranks a page, the user can click back and try another result. When an AI assistant gives one answer, it commits to a source. That asymmetry makes AI more conservative about trust than traditional search has ever been. AI platforms ask "is this safe to cite?" before they ask "is this relevant?", and a relevant source without trust signals loses to a less relevant source the model trusts.

The signal-to-noise problem has compounded the effect. AI tools now publish millions of pages a day, and the gap between genuine expertise and machine-generated filler has collapsed in the open web. Search engines and AI models both need stronger trust signals to separate them, and the brands that invested in real authority before the flood are the ones still being cited through it.

Industry analyses of ChatGPT and Perplexity citations consistently land on the same conclusion: domain-level authority, built through backlinks, editorial mentions, structured data, and consistent entity signals, is the single strongest predictor of AI citation probability, and the tactical playbook for engineering those citations builds directly on the same authority foundation. Authority is no longer a search engine ranking factor with AI as a side effect. It is now the master signal that decides visibility in both channels.

The Authority Stack: Three Layers That Compound

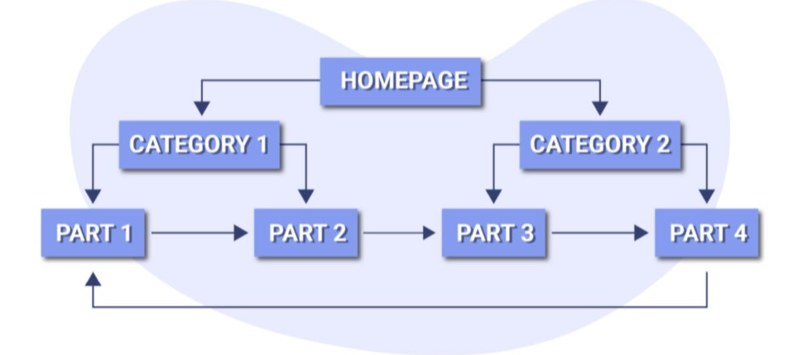

Authority is not one thing. It is three layers stacked on top of each other, each reinforcing the others, with backlinks running through all of them as the connective tissue.

The first layer is domain authority: the aggregate trust your entire website has earned over time. It sets the ceiling for what your individual pages can achieve and gives AI platforms a coarse signal about whether your site is the kind of source they should treat seriously.

The second layer is page authority: the ranking strength of individual URLs. Domains do not rank; pages do. AI does not cite homepages; it cites specific pages. This is the layer where positions are actually won and citations actually happen, and the layer you can move fastest because the levers are within your direct control.

The third layer is trust signals (E-E-A-T): the credibility wrapper around everything you publish. Trust is the foundation that page and domain authority both rest on. Untrustworthy pages have low E-E-A-T no matter how many backlinks they accumulate, and a single broken trust signal (anonymous content, missing contact information, unverifiable claims) can suppress an otherwise strong page.

Backlinks flow through every layer. They build domain authority by accumulating across the site over time, page authority by pointing directly at specific URLs, and trust because every editorial backlink from a credible publication is a third-party endorsement. The next sections work through each layer in detail, then circle back to the backlink engine that powers them all.

Layer 1: Domain Authority

Domain authority is the long game. It is built across months and years of consistent publishing, link earning, and brand building. It is the layer that grows slowest but compounds the hardest, and the layer that AI platforms reach for as the tiebreaker when two pages are otherwise comparable. Five strategies move domain authority in 2026, and they all compound on each other.

1. Build Topical Authority With Depth, Not Volume

Publishing fifty shallow articles on loosely related topics does not build authority. AI models and search engines both reward websites that cover a subject deeply and consistently. When your site demonstrates comprehensive knowledge of a specific domain, it becomes the source that algorithms trust.

Topical authority means interconnected content clusters where each piece adds a distinct angle on the same core topic. A site about AI search visibility builds authority by covering how AI engines choose which brands to recommend, how citation testing works, how to optimise content for different AI platforms, and how to measure the results. Audit your existing content for gaps. Identify the questions your audience asks that you have not answered. Then build those pieces with enough depth that a reader or an AI model would not need to go elsewhere for the answer.

2. Earn High-Quality Backlinks That Signal Trust

Backlinks remain one of the strongest authority signals in 2026, but quality matters far more than quantity. A single link from a respected industry publication carries more weight than dozens from low-quality directories, and AI models inherit those same trust signals when deciding what to cite. The tactical playbook for earning links sits later in this guide; at this layer, what matters is recognising that link quality is the lever that moves domain authority faster than almost anything else.

3. Publish Original Data and Proprietary Research

AI models do not invent data. When they need statistics, benchmarks, or industry-specific numbers, they pull from verifiable sources. Proprietary data (surveys, internal metrics, case studies with specific outcomes) gives AI platforms something they cannot find anywhere else.

Content based on original data earns citations at significantly higher rates than content that summarises other sources. Research from Otterly.ai's AI Citation Economy report, which analysed over one million AI citations across ChatGPT, Perplexity, and Google AI Overviews, found that AI platforms consistently favour sources with quotable, reference-grade content over generic overviews. You do not need a research department: start with internal metrics, customer survey results, or industry observations from your own work. A single data point that no one else has published can drive more citations than an entire article of recycled information.

4. Implement Structured Data and Schema Markup

Schema markup is the bridge between your content and how machines understand it. When you implement structured data correctly, you are telling search engines and AI systems exactly what your content is about, who created it, and how it relates to other entities. Sites with comprehensive schema markup see measurably higher citation rates in AI-generated answers. The most impactful types are Organization, Article, FAQPage, and HowTo. Beyond schema, your overall SEO foundation matters: clean URLs, proper heading hierarchies, fast page load times, and mobile optimisation all contribute to how search engines and AI platforms evaluate trustworthiness.
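As a rough illustration of what Organization markup looks like in practice, the sketch below builds a JSON-LD block with Python's standard `json` module. The function name `organization_jsonld` and the example company, URL, and profile link are hypothetical placeholders; only the `@context`, `@type`, `name`, `url`, and `sameAs` keys come from the schema.org vocabulary.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a <script> tag containing schema.org Organization JSON-LD.

    Hypothetical helper for illustration; adapt the fields to your own site.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists official profiles elsewhere on the web for the same entity.
        "sameAs": same_as,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values, not a real company.
snippet = organization_jsonld(
    "Example Co",
    "https://www.example.com",
    ["https://www.linkedin.com/company/example-co"],
)
print(snippet)
```

The printed tag would typically sit in the page's `<head>`. Validate the output with a structured data testing tool before relying on it.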

5. Strengthen E-E-A-T Signals

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, and Trustworthiness) is the de facto standard for how AI models evaluate source credibility, and it sits at the heart of the SEO ranking signals that still apply in 2026. Experience means showing that your content comes from people who have actually done the work. Expertise is demonstrated through depth of knowledge: author bios that explain qualifications, consistent publication in a specific domain, content that goes beyond surface-level advice. Authoritativeness comes from external validation, when other trusted sources reference your work. Trustworthiness is built through transparency, accuracy, and consistent messaging across your website and external profiles.

E-E-A-T is the bridge between domain authority and the trust layer covered later in this guide. The businesses that perform strongest in AI search visibility are the ones where E-E-A-T signals are genuine rather than manufactured.

Layer 2: Page Authority

Page authority is where rankings are actually won. Domain authority sets the ceiling, but it is individual page authority that determines which of your pages reaches it. Google ranks individual URLs. AI search engines cite individual pages. When ChatGPT answers a question about your industry, it pulls from specific pages, not your homepage. When Perplexity generates a response, it links to the exact URL it found most authoritative on that topic. Building domain authority is a long game measured in months and years; building page authority is something you can influence today, on every page you publish or update.

Page authority is a predictive score originally developed by Moz that estimates how likely a specific page is to rank in search engine results. The score ranges from 1 to 100, calculated using factors like the number and quality of backlinks pointing to that page, the linking domains' authority, and the page's overall link equity. Google does not use Moz's page authority score directly, but the underlying signals that PA measures (backlink quality, link diversity, content relevance) are exactly the signals Google's algorithm evaluates when deciding which URL deserves position one. The same signals now influence whether AI search engines pull a quote from your page.

Page Authority vs Domain Authority

| Factor | Page Authority | Domain Authority |
|---|---|---|
| Scope | Single URL | Entire domain |
| Influenced by | Direct backlinks, internal links, content quality | Total backlink profile, domain age, brand signals |
| Speed of improvement | Weeks to months | Months to years |
| Ranking impact | Direct: Google ranks URLs | Indirect: provides a baseline trust signal |
| AI citation impact | High: AI cites specific pages | Moderate: domain trust is a tiebreaker |

The practical implication: a page on a DA 30 website can outrank a page on a DA 70 website if the individual page has stronger authority signals. This happens constantly in competitive SERPs, and it happens even more frequently in AI search results where content quality and specificity carry extra weight.

Seven tactics build page authority that holds in both channels.

1. Earn Direct Backlinks to Individual Pages

Backlinks pointing directly to a page, not just to your homepage, are the single most powerful lever for page authority. A study by Backlinko of 11.8 million Google search results found that the number one result has an average of 3.8x more backlinks than positions two through ten. The mistake most sites make is concentrating all link-building effort on the homepage or a few pillar pages. Every page you want to rank needs its own backlink strategy: linkable assets on each important page, digital PR pitches that link to the most relevant URL, and resource page outreach for the specific page you want listed.

2. Build Strategic Internal Links

Internal links are the fastest way to influence page authority because they are entirely within your control. When another website links to one of your pages, that page gains authority. Internal links let you distribute that authority to other pages on your site.

Link from your highest-authority pages to your priority pages. Use descriptive anchor text: generic anchors like "click here" waste the opportunity to signal relevance. Create hub-and-spoke structures where a topic cluster pillar links to supporting articles and each supporting article links back, concentrating topical authority across the entire cluster. Audit for orphan pages: pages with no internal links pointing to them are invisible to both search engines and your own authority flow. Every important page should receive at least 3-5 contextual internal links from related content, and the broader URL and internal-link architecture shapes how that authority flows through your site.
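The orphan-page audit can be sketched in a few lines once you have a crawl of your own site. The example below assumes a hypothetical `site` mapping from each page path to the set of internal paths it links to; how you produce that mapping (crawler export, sitemap plus HTML parsing) is left open, and the function names are illustrative.

```python
def inbound_counts(links: dict[str, set[str]]) -> dict[str, int]:
    """Count internal links pointing at each known page, ignoring self-links."""
    counts = {page: 0 for page in links}
    for source, targets in links.items():
        for target in targets:
            if target != source and target in counts:
                counts[target] += 1
    return counts


def find_orphans(links: dict[str, set[str]]) -> set[str]:
    """Return pages that receive no internal links from any other page."""
    return {page for page, count in inbound_counts(links).items() if count == 0}


# Hypothetical crawl result: page -> internal pages it links to.
site = {
    "/": {"/guide", "/pricing"},
    "/guide": {"/", "/pricing"},
    "/pricing": {"/"},
    "/old-post": {"/guide"},
}

print(find_orphans(site))
print(inbound_counts(site))
```

The same counts can be compared against the 3-5 link guideline above to list pages that are linked, but under-linked.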

3. Create Comprehensive, Query-Satisfying Content

Content quality influences page authority through two mechanisms: it attracts natural backlinks, and it earns engagement signals that reinforce rankings. Pages that rank well and stay ranked fully answer the query, provide unique value through original data or novel frameworks, and are structured for extraction with clear headings, concise definitions, structured lists, and FAQ sections. Word count alone does not matter. A 1,500-word page that covers a topic thoroughly will outperform a 5,000-word page padded with filler.

4. Optimise On-Page SEO Signals

Title tags and H1s should contain your primary keyword (grounded in intent-driven keyword research for AI search) and compel clicks: a page that ranks but does not get clicked loses authority over time as CTR signals decline. URLs should be clean, descriptive, and short. Schema markup helps both search engines and AI platforms understand context and improves citation likelihood. Meta descriptions are not a direct ranking factor, but compelling ones improve CTR, which is a behavioural signal that reinforces rankings.

5. Improve Technical Performance

A slow-loading page loses authority regardless of how strong the domain is. Core Web Vitals, namely Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS), are measured per page, not per domain. Keep LCP under 2.5 seconds by compressing images, using modern formats (WebP/AVIF), and minimising render-blocking resources. Keep INP under 200ms. Keep CLS under 0.1 by setting explicit dimensions for images and embeds. Google indexes the mobile version of your page, so a degraded mobile experience drags page authority down.
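A per-page check against those three targets might look like the sketch below. The metric keys (`lcp_s`, `inp_ms`, `cls`) and the function name are assumptions made for this example; the measured values would come from whatever field or lab data you already collect.

```python
# Targets quoted in the text: LCP in seconds, INP in milliseconds, CLS unitless.
TARGETS = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}


def failing_vitals(measured: dict[str, float]) -> list[str]:
    """Return the metrics that exceed their target.

    A metric missing from `measured` is treated as failing, which is an
    assumption of this sketch rather than any official rule.
    """
    return [
        name
        for name, limit in TARGETS.items()
        if measured.get(name, float("inf")) > limit
    ]


# Hypothetical measurements for one URL.
page = {"lcp_s": 3.1, "inp_ms": 180, "cls": 0.05}
print(failing_vitals(page))
```

Running this for each priority URL, rather than once per domain, matches the per-page nature of the metrics.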

6. Build Topical Authority Around the Page

A page does not exist in isolation. Its authority is partly determined by the topical ecosystem around it. If your target page is about page authority, supporting articles about backlink building, internal linking, content optimisation, and technical SEO create a topical cluster that reinforces the main page's authority. Every supporting article should link to the main page, and the main page should link out to the supporting content. Brands that build topical authority are significantly more likely to be cited by AI search engines because AI platforms prefer sources that demonstrate comprehensive subject-matter expertise.

7. Monitor, Measure, and Iterate

Page authority is not static. Track referring domains per page, keyword rankings per page, AI citation frequency across the major answer engines, and content freshness: pages that have not been updated in 12+ months may experience content decay as search engines prefer fresher sources. Run quarterly audits on your top 20 revenue-driving pages: update the content, add new internal links, and run targeted outreach for any that are losing ground.
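The freshness check is easy to automate if you keep a last-updated date per URL. The sketch below is one possible approach; the `last_updated` mapping, the function name, and the 365-day cutoff (standing in for "12+ months") are illustrative assumptions.

```python
from datetime import date


def stale_pages(
    last_updated: dict[str, date], today: date, max_age_days: int = 365
) -> list[str]:
    """Return URLs whose last update is older than max_age_days, sorted by URL."""
    return sorted(
        url
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )


# Hypothetical content inventory.
inventory = {
    "/guide": date(2025, 1, 10),
    "/pricing": date(2026, 2, 1),
}
print(stale_pages(inventory, today=date(2026, 3, 1)))
```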

Layer 3: Trust Signals (E-E-A-T)

Trust is the foundation that every other authority signal rests on. Google's own quality guidelines state it plainly: "trust is most important", and the other E-E-A-T components contribute to trust rather than standing apart from it. Untrustworthy pages have low E-E-A-T no matter how experienced, expert, or authoritative they appear. The stakes are higher in AI search: a search engine ranking an untrustworthy result loses one click, but an AI platform citing an untrustworthy source amplifies the error into thousands of generated answers. That asymmetry makes AI systems more conservative about trust than search engines have ever been.

Three shifts have made trust the dominant factor in search visibility. First, AI platforms need permission to cite you: Semrush's practical audit of AI search trust signals frames credibility through three categories (entity identity, evidence and citations, and technical health) and notes that trust signals are what make inclusion in AI results possible at all. Second, E-E-A-T enforcement has tightened: core updates in 2025 and 2026 have rewarded sites with verifiable expertise while demoting those that rely on thin content or manufactured credentials. Third, AI-generated content has flooded the web, so search engines and AI models both need stronger trust signals to separate genuine expertise from machine-generated filler.

Trust is a composite of signals that search engines and AI models evaluate together: entity clarity (who you are, verifiable across platforms), third-party validation (who vouches for you), content accuracy (whether claims are verifiable and sourced), technical reliability (security, performance, transparency), and author credibility (whether the people behind your content have demonstrable expertise). AI platforms weight these dimensions differently in practice. Perplexity leans heavily on cited primary sources and review-style content. ChatGPT trusts what the broader internet agrees on. Gemini leans on what your brand says about itself, cross-referenced with external validation. Building trust for all of them means covering every category, not optimising for one.

Seven practical steps build the kind of trust both Google and AI platforms reward.

1. Demonstrate Real Experience

Google's first E in E-E-A-T stands for Experience, and it is the signal that separates genuine expertise from recycled advice. Show that the people behind your content have actually done the work. Publish case studies with concrete outcomes and timelines. Share what did not work alongside what did, because acknowledging trade-offs and failures is concrete evidence of firsthand involvement that recycled content cannot fake. Include original photos, screenshots, and data that could only come from direct experience.

2. Build Author Credibility

Every piece of content should have a named author with a dedicated bio page including professional credentials, links to external publications, and evidence of expertise in the subject matter. Anonymous content is a trust penalty in 2026: both Google and AI platforms discount it. Link author profiles to LinkedIn and any external validation. The goal is to make it trivially easy for a search engine or AI model to verify that the person giving advice is qualified to give it.

3. Implement Technical Trust Signals

HTTPS is the bare minimum. In 2026, Google evaluates security headers, mixed content warnings, and overall site reliability as part of its trust assessment. Core Web Vitals directly affect how trustworthy your site appears: keep LCP under 2.5 seconds, eliminate CLS, and ensure interactions respond within 200ms. A site that loads slowly or shifts unexpectedly signals unreliability to both users and algorithms.

4. Earn Third-Party Validation

The most powerful trust signal is what other credible sources say about you. Editorial backlinks from respected publications, brand mentions in industry reports, and citations in academic or professional content all contribute to the trust search engines and AI platforms assign to your domain. Focus on earning mentions rather than building links: digital PR, original research, and expert contributions to industry publications generate the kind of third-party validation that moves the needle. This connects directly to the backlink engine covered next.

5. Be Transparent About Who You Are

Trustworthy websites make it easy to find out who runs them, where they are based, and how to contact them. A detailed About page, visible contact information, a clear privacy policy, and accessible terms of service are baseline requirements. When these are missing or buried, both search engines and AI models interpret it as a trust deficit. For YMYL (Your Money or Your Life) categories such as health, finance, legal, and safety, transparency requirements are even higher.

6. Publish Accurate, Well-Sourced Content

Every factual claim on your site should be verifiable. Link to primary sources when citing statistics. Update content when facts change. AI platforms are especially sensitive to accuracy because they amplify whatever they cite: a wrong number on your page becomes a wrong number in thousands of AI-generated answers. Content that earns citations from AI platforms is content that models can cite without risk: clear statements, specific data points, and consistent accuracy across the entire site.

7. Maintain Entity Consistency Across Platforms

AI models build trust profiles by aggregating signals across the entire web. If your brand name, description, or key facts are inconsistent between your website, Google Business Profile, social media, and third-party directories, it creates uncertainty, and uncertainty is the opposite of trust. Audit your brand presence across every platform where you appear and ensure entity information (name, description, location, contact details, service descriptions) is consistent and current.
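One way to run that audit is to collect the entity fields from each platform into a simple mapping and flag any field whose values disagree. The sketch below assumes you have gathered the data by hand or through exports; the platform names, field names, and sample values are placeholders, and the normalisation (lowercasing, collapsing whitespace) is deliberately minimal.

```python
def normalise(value: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(value.lower().split())


def find_inconsistencies(
    profiles: dict[str, dict[str, str]]
) -> dict[str, dict[str, str]]:
    """Return each field whose normalised value differs between platforms.

    The result maps field name -> {platform: raw value} for manual review.
    A platform that lacks a field counts as a mismatch (empty string).
    """
    fields = set()
    for profile in profiles.values():
        fields |= set(profile)

    issues = {}
    for field in sorted(fields):
        values = {
            platform: profile.get(field, "")
            for platform, profile in profiles.items()
        }
        if len({normalise(v) for v in values.values()}) > 1:
            issues[field] = values
    return issues


# Placeholder data for three platforms.
profiles = {
    "website": {"name": "Example Co", "phone": "+44 20 7946 0000"},
    "google_business_profile": {"name": "Example Co", "phone": "+44 20 7946 0999"},
    "directory": {"name": "example  co", "phone": "+44 20 7946 0000"},
}
print(find_inconsistencies(profiles))
```

Here the differently cased name passes, while the mismatched phone number is surfaced for correction.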

The Backlink Engine That Powers All Three Layers

Backlinks are the connective tissue that flows through every layer of the authority stack. Google's own link spam policies make clear that the company still actively polices link manipulation, which is only meaningful because links continue to carry ranking weight. Industry analyses of ChatGPT citations consistently find that sites with deeper referring-domain profiles are materially more likely to be cited than sites with thin link profiles. Most backlink advice is either outdated or too vague to act on. Seven tactics work right now, with a focus on earning links that improve both your search rankings and your AI visibility.

1. Publish Original Research and Data Studies

Original research is the single most effective link magnet. When you publish proprietary data, industry surveys, or analysis that others cannot find elsewhere, journalists, bloggers, and content creators link to your study as their source. The key is specificity: "we surveyed 500 marketers about their 2026 priorities" earns far more links than a generic opinion piece. Data-led pieces win because they offer something quotable that other writers cannot easily reproduce, and every quote in a downstream article is another opportunity for a backlink. Start with the data you already have, because internal metrics and customer patterns are unique to you, and uniqueness is what makes content linkable.

2. Use Digital PR to Earn Media Coverage

Digital PR creates backlinks through news coverage, expert quotes, and industry reports. Platforms like Qwoted, Featured.com (which acquired the HARO brand from Cision in 2025 after Connectively was shut down in December 2024), and Muck Rack connect you with journalists actively seeking expert sources. When a reporter uses your quote, they typically link back. One well-placed quote in a high-authority publication can be worth dozens of lower-quality links. The AI visibility angle matters here too: when reputable news sites mention and link to your brand, AI models that train on web content absorb that association.

3. Write Guest Posts on Niche-Relevant Sites

Guest posting still works, but only when done right. Writing for sites in your niche with real audiences earns contextual backlinks that both search engines and AI agents value highly. Focus on quality over quantity. One guest post on an industry-leading blog with 50,000 monthly readers delivers more value than ten posts on obscure sites with no traffic. Read their existing content carefully before pitching, and offer a topic they have not covered yet or a fresh angle on a subject their audience cares about.

4. Find and Fix Broken Links on Other Sites

Broken link building is one of the most reliable and relationship-friendly tactics available. Find pages in your niche that link to resources that no longer exist (404 errors), create content that serves as a replacement, and reach out to the site owner with a helpful suggestion. Tools like Ahrefs, Semrush, and Check My Links (a free Chrome extension) make finding broken links straightforward. The key is providing genuine value: you are helping the webmaster fix their site, not just asking for a link.

5. Reclaim Unlinked Brand Mentions

If someone mentions your brand online without linking to you, that is the easiest backlink opportunity you will ever find. The writer already knows your brand; they simply did not include a link. Set up alerts through Google Alerts, Mention, or BrandMentions (a structured workflow for tracking and reclaiming brand mentions keeps the process repeatable), and when you find an unlinked mention, reach out to the author with a polite request to add a link. Conversion rates are significantly higher than cold outreach because the relationship already exists. For most businesses, unlinked mention reclamation is the fastest path to new backlinks with the least effort.

6. Build Free Tools and Interactive Resources

Free tools, calculators, and interactive resources attract backlinks continuously because they provide ongoing utility. A mortgage calculator, an SEO audit tool, or an industry benchmark generates links every time someone references it. The investment is higher upfront, but the return compounds. Unlike a blog post that earns most of its links in the first few weeks, a useful tool keeps attracting links for months or years, and tools that people consistently reference become the resources AI agents recommend.

7. Pitch Your Content to Resource Pages

Resource pages (curated lists of helpful links on a specific topic) exist in almost every industry. Universities, industry associations, and professional organisations maintain these pages, and getting listed earns high-authority backlinks. Search for phrases like "useful resources" + [your topic], "helpful links" + [your industry], or inurl:resources + [your keyword] to find relevant pages, then reach out with a concise pitch explaining exactly which section your resource fits into and why their audience would benefit.
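If you prospect across several topics, the three search patterns above can be generated rather than typed by hand. This is a small sketch; the function name and the sample topic are placeholders, and the query strings simply mirror the patterns listed in the paragraph.

```python
def resource_page_queries(topic: str) -> list[str]:
    """Build the three resource-page search queries for one topic."""
    topic = topic.strip()
    return [
        f'"useful resources" {topic}',
        f'"helpful links" {topic}',
        f"inurl:resources {topic}",
    ]


for query in resource_page_queries("solar energy"):
    print(query)
```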

What to Avoid: Tactics That Will Hurt You

Buying links, participating in link exchange schemes, or using automated tools to generate hundreds of low-quality links violates Google's link spam policies and can result in manual penalties that devastate your rankings. In the AI search era, the risk is compounded: a site penalised by Google sends negative trust signals to every AI platform that uses search data. Private blog networks (PBNs), link exchanges, and mass directory submissions are not shortcuts; they are accelerants for the kind of link profile that suppresses every authority signal you have built.

Protecting Authority: How to Handle Toxic Backlinks

Not every link pointing to your website is helping it. Some are actively dragging it down or, at the very least, creating risk you have not accounted for. These are toxic backlinks, and understanding them is the difference between a clean link profile and one that triggers a Google manual action or quietly suppresses your AI citation rate.

A toxic backlink is an incoming link from a source that violates Google's link spam policies or exhibits patterns associated with manipulation rather than genuine editorial endorsement. The key word is pattern. A single low-quality link rarely causes damage: Google's systems are sophisticated enough to ignore isolated spam links. The problem arises when your backlink profile contains clusters of manipulative links suggesting that someone (you, a previous SEO agency, or a competitor running a negative SEO campaign) deliberately built them.

Common sources include link farms and private blog networks (PBNs), which are sites created solely to sell or exchange links, with thin content and dense interlinking; mass directory submissions to low-quality, irrelevant foreign-language directories; comment and forum spam; links injected into hacked sites through security vulnerabilities; paid links without rel="nofollow" or rel="sponsored"; and excessive exact-match anchor text. If 40% of your backlinks use the exact keyword phrase you are trying to rank for, that pattern screams manipulation. Natural link profiles have diverse, often branded or generic anchor text.

Google's position has evolved. Today, Google claims to ignore most bad links rather than penalising sites for them, but "most" is doing a lot of heavy lifting. Manual actions for unnatural inbound links still exist. And while algorithmic penalties are less common than a decade ago, sites with manipulative link patterns still experience ranking suppression; it just happens more quietly now, without an explicit notification. The practical risk has three forms: manual actions that demote or remove your pages from search results, algorithmic devaluation by SpamBrain that quietly stops rankings improving, and AI visibility damage as the downstream authority signals AI models rely on weaken.
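The exact-match anchor check is simple arithmetic over an exported anchor list. The sketch below computes the share of anchors that exactly match a target phrase; the function name, the sample anchors, and the use of the 40% figure as a flagging threshold are assumptions for illustration, not a rule published by any search engine.

```python
def exact_match_share(anchors: list[str], target_phrase: str) -> float:
    """Return the fraction of anchors equal to target_phrase (case-insensitive)."""
    if not anchors:
        return 0.0
    target = target_phrase.strip().lower()
    matches = sum(1 for anchor in anchors if anchor.strip().lower() == target)
    return matches / len(anchors)


# Hypothetical anchor text export: 4 exact-match anchors out of 10.
anchors = ["best crm software"] * 4 + [
    "Example Co",
    "example.com",
    "this guide",
    "click here",
    "Example Co CRM",
    "read more",
]

share = exact_match_share(anchors, "best crm software")
print(f"{share:.0%} exact-match anchors")
if share >= 0.4:
    print("Anchor profile looks unnatural; review the linking domains.")
```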

Finding Toxic Backlinks

Google Search Console (free) is where to start. The Links report shows your top linking sites, top linked pages, and top linking text. It will not label links as "toxic," but it gives you the raw data to spot obvious problems: linking domains you do not recognise that appear hundreds of times, anchor text patterns that are suspiciously keyword-rich, and sudden spikes in linking domains. Search Console also shows any manual actions under Security & Manual Actions. If you have one for "Unnatural links to your site," you have a confirmed toxic backlink problem.

Semrush's Backlink Audit tool is the most widely used paid option. It assigns a toxicity score to each link based on a wide set of markers, including link source quality, anchor text distribution, link placement and context, and network patterns, and lets you build a disavow file directly. It tends to flag aggressively, so manual review is essential, but it is the fastest way to surface candidates.

Ahrefs takes a more cautious approach. They explicitly state that they do not provide a "toxic link score" because they believe the concept is often oversimplified. Instead, Ahrefs gives detailed data about every backlink (referring domain rating, traffic, anchor text, link type) and lets you make your own judgement. Filter the Backlinks report by DR 0-10, one backlink per domain, and dofollow only.

Regardless of which tool you use, every flagged link should pass through a manual check.

| Signal | What to Look For |
|---|---|
| Relevance | Is the linking site in your industry or a related field? |
| Content quality | Does the page have real content, or is it thin/auto-generated? |
| Traffic | Does the site have any organic traffic? (Zero traffic = likely spam) |
| Link context | Is your link within editorial content or buried in a sidebar/footer? |
| Anchor text | Is the anchor natural, or is it an exact-match keyword phrase? |
| Link neighbourhood | What other sites does this page link to? If they are all casinos and pharma, run |

Removing Toxic Backlinks

Once you have identified genuinely toxic links, you have two options.

Contact the webmaster. This is the preferred method. Find the site owner's contact information and request removal, including the exact URL of the page containing the link, the anchor text, and your target URL. Reality check: response rates for link removal requests are typically below 10%, and most spam sites have no functioning contact information. This step is worth attempting for legitimate sites that may have been compromised, but do not expect it to solve the problem entirely.

Disavow through Google. The Disavow Tool tells Google to ignore specific links or entire domains when assessing your site.

When to disavow: you have an active manual action for unnatural links; you have identified a large pattern of manipulative links (hundreds or thousands from PBNs, link farms, or spam networks); you used a link building service in the past that you now know was violating guidelines.

When NOT to disavow: a tool flagged a few dozen links as "potentially toxic" but you have no manual action and no ranking problems (Google's own documentation states that "in most cases, Google can assess which links to trust without additional guidance"); the links are simply from low-authority sites, and low authority is not the same as toxic; or you are doing it "just to be safe", when disavowing legitimate links can actually hurt your rankings by removing valid signals.
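
The decision rule and the file itself are simple enough to sketch. The disavow file is a plain text file with one entry per line: a full URL, or a whole domain prefixed with `domain:`, with `#` marking comment lines. The helper names `should_disavow` and `build_disavow_file` are hypothetical, and the domains in the example are placeholders.

```python
def should_disavow(has_manual_action, has_large_manipulative_pattern,
                   used_guideline_violating_service):
    """Mirror the 'when to disavow' conditions: any one of them is enough."""
    return (has_manual_action
            or has_large_manipulative_pattern
            or used_guideline_violating_service)


def build_disavow_file(domains, urls=(), note=""):
    """Return disavow file text: optional comment, domain lines, then URL lines."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Deduplicate and normalise domains before writing them out.
    for domain in sorted({d.strip().lower() for d in domains if d.strip()}):
        lines.append(f"domain:{domain}")
    for url in sorted({u.strip() for u in urls if u.strip()}):
        lines.append(url)
    return "\n".join(lines) + "\n"


# A tool flagging a few links meets none of the conditions, so nothing is written.
tool_flag_only = should_disavow(False, False, False)

disavow_text = build_disavow_file(
    ["Spam-Farm.example", "spam-farm.example"],
    ["https://blog.example/bad-page.html"],
    note="PBN cleanup",
)
```

Gating the file generation behind `should_disavow` keeps the "just to be safe" case from ever producing a file. Only entries that survived manual review should be passed in.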

The AI Visibility Dimension

The three layers and the backlink engine converge on one outcome: AI citation likelihood. AI search engines do not count backlinks directly. They do not run Moz's page authority calculation. What they do is inherit the downstream signals (domain trust, editorial mention frequency, knowledge graph presence, entity consistency, content extractability) that compound from the authority stack covered above. A site with a clean, editorially earned link profile builds stronger signals across all of those dimensions. A site associated with link spam neighbourhoods builds weaker ones, even if Google is currently ignoring those links.

The compounding effect runs deeper than most teams realise. Pages with strong authority rank higher in traditional search, which increases their visibility to AI crawlers, which increases their likelihood of being included in AI training data and retrieval indexes, which further reinforces their authority. The brands winning in both channels treat every important page as its own authority-building project: not a piece of content to publish and forget, but an asset that earns links, gets updated, and stays optimised for both human readers and AI extraction.

Your 30-Day Authority Action Plan

Authority compounds slowly. Thirty days will not transform your domain, but it is enough to make the highest-leverage moves from each layer and set the system in motion.

Week 1: Audit the foundation. Run a structured AI visibility audit alongside the link review so authority gaps and citation gaps surface together. Export your backlinks from Google Search Console (Links > Top linking sites > Export). Sort by linking pages count and check the top 20 unfamiliar domains manually. Run a Semrush or Ahrefs audit if you have access. Note any patterns of manipulative links, but only plan to disavow if you find a clear pattern, not because a tool gave a few links a red score. At the same time, audit your top 20 revenue-driving pages: which have the most referring domains, which are losing rankings, and which lack a clear topical cluster around them.
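
The "top 20 unfamiliar domains" step can be scripted once the export is in hand. This is a sketch under stated assumptions: the CSV is assumed to have `Site` and `Linking pages` columns, `known_sites` is a list you maintain yourself, and `top_unfamiliar_sites` is a hypothetical helper name. Check the headers of your own export before relying on it.

```python
import csv
import io


def top_unfamiliar_sites(csv_text, known_sites, limit=20):
    """Return (site, linking_pages) pairs for unrecognised sites, largest first."""
    known = {site.lower() for site in known_sites}
    unfamiliar = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        site = row["Site"].strip().lower()
        if site in known:
            continue
        # Strip thousands separators in case the export formats numbers as "1,204".
        linking_pages = int(row["Linking pages"].replace(",", ""))
        unfamiliar.append((site, linking_pages))
    unfamiliar.sort(key=lambda pair: pair[1], reverse=True)
    return unfamiliar[:limit]


# Invented sample export for illustration.
sample_export = (
    "Site,Linking pages,Target pages\n"
    "partner.example,40,3\n"
    "unknown-a.example,900,1\n"
    "unknown-b.example,12,2\n"
)
to_review = top_unfamiliar_sites(sample_export, {"partner.example"})
```

The result is only the queue for the manual check. Whether any of these domains is actually a problem still depends on relevance, content quality, and link context.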

Week 2: Fix the trust layer. Add author bios with verifiable credentials to every content page. Make sure your About, Contact, Privacy, and Terms pages are detailed and accessible. Audit entity consistency across Google Business Profile, social profiles, and third-party directories, and fix any mismatches in name, description, location, and contact details. Implement Organization, Article, and FAQ schema if they are missing.
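
For the Organization schema, a small generator helps keep the markup consistent with the entity details you have just audited. The sketch below emits schema.org JSON-LD using the `name`, `url`, `logo`, and `sameAs` properties. The function name `organization_jsonld` and every value in the example are placeholders, and a real implementation would likely include more properties.

```python
import json


def organization_jsonld(name, url, logo_url, same_as):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo_url,
        # sameAs lists the official profiles that should all describe the same entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap JSON-LD in the script tag that goes in the page markup."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


markup = as_script_tag(
    organization_jsonld(
        name="Acme Widgets",
        url="https://acme.example",
        logo_url="https://acme.example/logo.png",
        same_as=["https://social.example/acme-widgets"],
    )
)
```

Generating the block from one source of truth means the name and URLs in the markup cannot drift from the ones used in directory and profile listings.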

Week 3: Strengthen page authority on priority pages. Pick the three highest-priority pages from week one. Add 5+ contextual internal links to each from your highest-authority existing content. Update each page with original data, expert commentary, or a unique framework that differentiates it from competing pages. Tighten title tags and meta descriptions for click-through rate. Run them through Core Web Vitals and fix any LCP, INP, or CLS issues.
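
To triage the Core Web Vitals results, it helps to classify each measurement against Google's published thresholds: LCP is good at 2.5 seconds or less and poor above 4 seconds, INP is good at 200 ms or less and poor above 500 ms, and CLS is good at 0.1 or less and poor above 0.25. The helper names `rate_metric` and `issues_to_fix` are hypothetical, and the page measurements in the example are invented.

```python
# (good upper bound, needs-improvement upper bound) per metric.
# LCP is in seconds, INP in milliseconds, CLS is unitless.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate_metric(metric, value):
    """Classify one measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def issues_to_fix(measurements):
    """Return only the metrics that are not rated good, with their rating."""
    ratings = {metric: rate_metric(metric, value)
               for metric, value in measurements.items()}
    return {metric: rating for metric, rating in ratings.items()
            if rating != "good"}


# Invented measurements for one priority page.
page = {"LCP": 3.1, "INP": 180, "CLS": 0.31}
todo = issues_to_fix(page)
```

Running this across the three priority pages gives a short, ordered fix list: anything rated poor first, then the metrics that need improvement.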

Week 4: Start the backlink engine. Pick one of the seven tactics covered earlier and commit to it for the next 90 days. For most teams, the highest-leverage move is original research: take an internal data point you already have, package it into a clear, well-structured study, and pitch it through digital PR. Pair that with an unlinked brand mention reclamation pass: set up alerts, find existing mentions, and request links. These two tactics together produce the editorial backlinks that move every layer of the authority stack at once.

Frequently Asked Questions

Do backlinks still matter for SEO and AI visibility in 2026?

Yes. Google has confirmed that backlinks remain a top ranking factor, and their importance extends to AI search. Industry analyses of AI citation patterns consistently find domain-level authority, built largely through backlinks, to be among the strongest predictors of whether AI platforms cite a site. AI engines do not count backlinks directly, but they inherit the downstream authority signals that backlinks build.

What is the most effective single move to build authority?

Publishing original research with proprietary data. AI models cannot produce new first-party data on their own, so they cite sources that provide unique, verifiable facts. Even small-scale original research (internal metrics, customer surveys, or industry observations) can drive more AI citations than dozens of articles that summarise existing information. A single proprietary data point, written up clearly with the methodology disclosed, will continue to attract editorial backlinks and AI citations long after a how-to article on the same topic has stopped being referenced.

What is the difference between page authority and domain authority?

Domain authority is the aggregate trust of an entire website. Page authority is the ranking strength of a single URL. Domain authority sets the ceiling, but page authority determines which of your pages actually reaches it. Google ranks individual URLs and AI cites individual pages, not domains. A page on a DA 30 site can outrank a page on a DA 70 site if its individual authority signals are stronger.

Is it safe to buy backlinks?

No. Buying links, participating in link exchange schemes, or using automated tools to generate low-quality links violates Google's link spam policies and can result in manual penalties. In the AI era, the risk is compounded: a penalised site sends negative trust signals to every AI platform that uses search data.

Do toxic backlinks affect AI search visibility, and when should I disavow?

AI search engines do not count backlinks directly, but a polluted link profile weakens the downstream authority signals AI models rely on: lower domain authority, fewer genuine editorial mentions, weaker knowledge graph presence. Only use Google's Disavow Tool when you have an active manual action, you have identified a large pattern of manipulative links (hundreds or thousands from PBNs and link farms), or you used a link building service that you now know was violating guidelines. Do not disavow because a tool flagged a few dozen links as "potentially toxic"; Google's own documentation states that in most cases Google can assess which links to trust without additional guidance.

How long does it take to build website authority?

Authority is a compounding asset that builds over months, not days. Most businesses see measurable improvements in search rankings within three to six months of sustained effort, with AI citation gains following as authority signals accumulate. Page-level authority moves faster than domain authority because the levers (internal links, content updates, targeted link building) are within direct control.

What is the difference between trust and authority, and how do AI platforms evaluate trust?

Authority measures how much weight your content carries: domain strength, backlink profile, topical coverage. Trust measures whether your content is safe to rely on: accuracy, transparency, verifiable credentials, consistent entity signals. AI platforms will pass over an authoritative source if trust signals are missing. Perplexity prioritises expert citations and customer reviews. ChatGPT trusts consensus across multiple trusted sources. Gemini cross-references what a brand says about itself with external validation. Building trust for AI visibility means covering all these dimensions, not optimising for one platform.

Can a small business compete on authority against larger competitors?

Yes, particularly through topical authority. A small business that deeply covers a specific niche with interconnected content clusters can outperform larger competitors with broader but shallower coverage. AI models evaluate expertise at the topic level, so a focused authority strategy in a defined domain is more effective than competing across many topics simultaneously.

To see how AI platforms currently evaluate your website's authority, run a free AI readiness scan for your AI Readiness Score in 30 seconds, or explore the full AI Readiness Audit for cross-platform research that tests citation, trust, and authority signals across nine AI platforms.