Every month, marketing teams open their reports and see the same metrics they have tracked for a decade. Rankings. Traffic. Click-through rate. Backlinks. The numbers look fine. The dashboards are green. Meanwhile, millions of potential customers are asking ChatGPT, Perplexity, Gemini, and Claude for recommendations in your industry, and your brand is nowhere in the answer.

That disconnect is not a minor reporting gap. It is the single biggest blind spot in modern marketing measurement. The metrics that mattered two years ago cannot tell you whether AI models discover your brand, trust your content, or cite you when a potential customer is forming an opinion. In 2026, content performance is a two-layer problem: the traditional engagement layer most dashboards already track, and the AI visibility layer most dashboards still ignore.

This guide is the full picture: which traditional metrics still matter, which AI-native metrics your reports are missing, how AI models actually learn about your brand, and how to build a measurement stack that captures both layers instead of just one.

Key Takeaways

- Traditional SEO metrics measure one discovery channel. AI-assisted discovery happens in a separate, fast-growing channel that rankings, traffic, and CTR are structurally blind to.

- Nearly 60% of Google searches now end without a click, and Google's AI Overviews peaked at around 25% of all searches in mid-2025 before pulling back to roughly 15% by late 2025. The click-based measurement world is shrinking while AI answers grow.

- Branded web mentions correlate with AI Overview appearances at 0.664, far higher than backlinks at 0.218, according to an Ahrefs study of 75,000 brands. What others say about you matters more than what you say about yourself.

- Almost 90% of ChatGPT citations come from pages ranking position 21 or lower, meaning your traditional rank tracking misses the pages AI models actually use.

- Only 30% of brands maintain consistent visibility across consecutive AI answers (AirOps 2026 research), making AI share of voice a volatile, high-stakes competitive metric.

- High-traffic page mentions compound across training snapshots and retrieval indexes, so brands earning them early build an advantage that late movers cannot easily replicate.

The Reporting Blind Spot

Consider what a standard SEO report measures. It tells you where your pages rank in Google, how much traffic those rankings generate, which keywords drive clicks, and how your domain authority compares to competitors. All of this is useful. None of it captures what happens when a user bypasses search engines entirely and asks an AI assistant instead.

This is not a niche behaviour. ChatGPT has surpassed 800 million weekly active users, and as of February 2026 OpenAI confirmed the figure has climbed to 900 million. Google's AI Overviews now appear on roughly 15% to 25% of all searches depending on the period, with informational queries seeing the highest exposure rate. Perplexity, Claude, and Gemini are processing millions of queries daily. Research from Semrush indicates that LLM traffic is on track to overtake traditional Google search volume by 2028.

Yet the reporting infrastructure most businesses rely on was designed for a world where discovery happened through ten blue links. The blind spot is not that SEO reports are wrong; it is that they are incomplete. They measure one discovery channel while another, faster-growing channel operates entirely outside their field of vision.

Three specific gaps explain why traditional metrics miss AI visibility:

Rankings measure position, not presence. Ranking first on Google for a keyword tells you nothing about whether ChatGPT mentions your brand when a user asks about the same topic. AI models pull from training data, retrieval systems, and real-time search, and their source selection logic is fundamentally different from Google's ranking algorithm.

Traffic attribution breaks down. When a user asks Perplexity for a recommendation, visits your website directly afterwards, and converts, your analytics attributes that visit to "direct" traffic. The AI-assisted discovery step is invisible. Your SEO report shows a direct visit. Your paid team claims it was brand awareness. Nobody credits the AI mention that actually drove the decision.

Crawl and index status is insufficient. Traditional SEO tracks whether your pages are indexed by Google. But being indexed by Google is not the same as being discoverable by AI models. LLM visibility depends on training data presence, retrieval-augmented generation access, and content structure: three dimensions that Google indexing does not guarantee.

Why CTR Alone No Longer Tells the Full Story

CTR measures one thing: whether someone clicked your search result. It was a reliable signal when search engines presented ten blue links and users picked one. That world is shrinking fast.

Google's AI Overviews appear on a meaningful share of all searches, answering questions directly inside the results page. The exact share has fluctuated through 2025 between 15% and 25% as Google has tuned the feature, but the trajectory is clear. In these environments, your brand's value is measured by whether it appears in the AI-generated answer, not by whether someone clicks through to your website.

This does not mean CTR is irrelevant. Transactional and brand-specific queries still drive clicks. But for the informational and research queries that shape purchase decisions (such as "best CRM for small teams", "how to improve website security", or "top accounting software"), AI platforms increasingly deliver answers without sending traffic. If your measurement stops at CTR, you are blind to an entire channel of brand exposure.

The shift requires new metrics that measure whether AI systems know your brand exists, trust it enough to cite, and include it when generating relevant answers.

The Traditional Metrics That Still Matter, Read Through an AI Lens

Before layering on AI-native metrics, it is worth being honest about which traditional metrics still pull their weight. Several do, but their role has changed. They are no longer the whole story; they are the foundation AI visibility is built on.

Organic traffic remains the foundation of content performance measurement. A page with growing organic traffic is a page that search engines consider relevant and authoritative, and pages with strong organic traffic also tend to receive more mentions from AI search engines, making this metric doubly important. Track organic traffic per page, not just site-wide. Google Analytics 4 lets you filter by landing page and traffic source so you can see exactly which articles pull their weight. Watch for sudden drops on specific pages: they often signal a ranking loss, a technical issue, or a competitor publishing a stronger piece.

Keyword rankings still show where your content appears in search results for specific queries. Pages ranking one through three capture the majority of clicks in the click-based channel. Track both your primary keywords and long-tail variations in Google Search Console. Pages ranking for dozens of related queries signal strong topical authority, exactly the kind of signal AI models weigh when deciding which sources to cite.

Click-through rate (CTR) matters most for transactional and brand-specific queries. According to Advanced Web Ranking's CTR study, CTR for position one on Google typically lands in the 25 to 35 percent range on desktop, falling to roughly 8 to 15 percent at position three (with significant variance by industry, query type, and SERP features). If your page ranks in the top three but gets a CTR well below these benchmarks, rewriting your title and description is one of the highest-leverage improvements you can make, and a stronger headline also increases the chance that AI systems surface your page in a summary.

Engagement time, GA4's replacement for average session duration, filters out tabs left open in the background and measures actual interaction. Short engagement times paired with high traffic suggest the content attracts clicks but fails to deliver on the headline's promise. Engagement time also correlates with the comprehensive, in-depth coverage that AI engines prefer to cite.

Bounce rate in GA4 is the inverse of engagement rate: a session counts as engaged if the user stays more than 10 seconds, views multiple pages, or triggers a conversion event. A high bounce rate is not automatically bad. A blog post that fully answers a reader's question in a single visit may bounce and still perform well. Compare bounce rates across similar content types rather than judging them in isolation.
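As a minimal sketch of that definition, the Python below classifies sessions as engaged and derives bounce rate as one minus engagement rate. The `Session` record and its field names are hypothetical and do not mirror GA4's export schema.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical fields for illustration; not GA4's actual export schema.
    duration_seconds: float
    page_views: int
    conversions: int


def is_engaged(session: Session) -> bool:
    """Engaged if it lasts over 10 seconds, views 2+ pages, or converts."""
    return (
        session.duration_seconds > 10
        or session.page_views >= 2
        or session.conversions >= 1
    )


def bounce_rate(sessions: list[Session]) -> float:
    """Bounce rate is the share of sessions that were not engaged."""
    if not sessions:
        return 0.0
    engaged = sum(is_engaged(s) for s in sessions)
    return 1 - engaged / len(sessions)


sessions = [
    Session(duration_seconds=4, page_views=1, conversions=0),   # bounce
    Session(duration_seconds=45, page_views=1, conversions=0),  # engaged by time
    Session(duration_seconds=6, page_views=3, conversions=0),   # engaged by pages
    Session(duration_seconds=8, page_views=1, conversions=1),   # engaged by conversion
]
print(f"Bounce rate: {bounce_rate(sessions):.0%}")
```

Only the first session fails all three conditions, so one session in four counts as a bounce.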

Conversion rate measures whether your content drives the action you want. Traffic and engagement are means to an end. You may find that a blog post with modest traffic converts at three times the rate of your highest-traffic page. That insight shifts your strategy toward the content type that converts, not just the type that attracts.
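A short worked example of that comparison, using made-up page paths and numbers purely for illustration:

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Conversions divided by sessions; 0.0 when there is no traffic."""
    return conversions / sessions if sessions else 0.0


# Hypothetical pages and figures, chosen to show a 3x difference in rate.
pages = {
    "/high-traffic-guide": {"sessions": 10_000, "conversions": 100},
    "/niche-comparison": {"sessions": 800, "conversions": 24},
}

# Rank pages by conversion rate rather than by raw traffic.
ranked = sorted(
    pages,
    key=lambda p: conversion_rate(pages[p]["conversions"], pages[p]["sessions"]),
    reverse=True,
)
for page in ranked:
    rate = conversion_rate(pages[page]["conversions"], pages[page]["sessions"])
    print(f"{page}: {rate:.1%}")
```

Here the lower-traffic page converts at 3% against 1% for the high-traffic one, so it ranks first once you sort by rate.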

Backlinks remain one of the strongest ranking factors in traditional search, and research from Backlinko shows a strong correlation between the number of referring domains and higher rankings. Beyond SEO, backlinks from authoritative sites also influence how AI models perceive your content. AI training data and retrieval systems give more weight to pages that are frequently referenced across the web. A page with 50 quality backlinks is far more likely to appear in AI-generated answers than a page with none. You can learn more about building quality backlinks as part of your content strategy.

These seven metrics are the foundation. But the foundation is not the building. The rest of this guide covers the layer that sits on top of it.

The AI-Native Metrics Most Dashboards Miss

These metrics require a fundamentally different measurement approach. You cannot pull them from Google Search Console or Google Analytics. They require querying AI platforms directly, comparing responses over time, and benchmarking against competitors.

1. AI Citation Frequency

AI citation frequency measures how often AI platforms explicitly reference your brand or link to your content when answering relevant queries. This is the most direct signal of AI visibility: either the platform names you as a source, or it does not.

An Ahrefs study of 75,000 brands shows that branded web mentions have a 0.664 correlation with AI Overview appearances, far stronger than backlinks at 0.218 or domain authority alone. The implication is clear: the more your brand is mentioned across the web in authoritative contexts, the more likely AI platforms are to surface you when users ask. Building a systematic citation strategy is the lever that turns scattered web mentions into consistent AI citations.

Tracking citation frequency means querying multiple AI platforms with relevant prompts and measuring how often your brand appears in the responses. SwingIntel's AI Readiness Audit does exactly this, querying 9 AI platforms with thousands of AI queries across 12 categories to measure your real citation frequency, not an estimate.

2. Discoverability Rate

Discoverability rate answers a harder question than citation frequency: does AI mention your brand when no one asks about you by name?

When a user asks ChatGPT "what is the best project management tool for remote teams", the AI draws from its training data and live search to generate an answer. If your project management tool appears in that response without the user ever mentioning your brand name, you have earned organic AI discoverability. If it does not, you are invisible at the exact moment a potential customer is forming an opinion.

This metric requires testing with unbranded, category-level prompts, the kind of questions your target customers actually ask. It is the hardest metric to score well on, because the AI must independently associate your brand with the relevant category. Brands with strong entity authority, consistent web presence, and structured data tend to score highest.
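Both citation frequency and discoverability rate reduce to counting brand mentions in collected AI answers. The sketch below assumes you have already gathered the responses by whatever means you use; the `Answer` record, the brand names, and the sample text are all hypothetical. It matches the brand as a whole word, which would need adjusting for names that end in punctuation.

```python
import re
from dataclasses import dataclass


@dataclass
class Answer:
    # Hypothetical record of one prompt sent to one AI platform.
    platform: str
    prompt: str
    response: str


def mentions(text: str, brand: str) -> bool:
    """Case-insensitive whole-word match for the brand name."""
    return re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE) is not None


def citation_frequency(answers: list[Answer], brand: str) -> float:
    """Share of all answers that name the brand."""
    if not answers:
        return 0.0
    return sum(mentions(a.response, brand) for a in answers) / len(answers)


def discoverability_rate(answers: list[Answer], brand: str) -> float:
    """Share of answers to unbranded prompts that still name the brand."""
    unbranded = [a for a in answers if not mentions(a.prompt, brand)]
    return citation_frequency(unbranded, brand)


answers = [
    Answer("platform-a", "What is Acme known for?", "Acme is known for project tracking."),
    Answer("platform-a", "Best project management tool for remote teams?",
           "Popular options include Globex and Acme."),
    Answer("platform-b", "Best project management tool for remote teams?",
           "Globex and Initech are common choices."),
    Answer("platform-b", "Top tools for sprint planning?", "Initech is widely used."),
]
print(f"Citation frequency: {citation_frequency(answers, 'Acme'):.0%}")
print(f"Discoverability rate: {discoverability_rate(answers, 'Acme'):.0%}")
```

The branded prompt counts toward citation frequency but is excluded from discoverability, which is why the second number is lower.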

3. AI Share of Voice

AI share of voice measures the percentage of relevant AI-generated answers that mention your brand compared to your competitors. If your competitor appears in 7 out of 10 answers and you appear in 2, your share of voice is 20% versus their 70%.
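The arithmetic in that example can be sketched in a few lines of Python. The input format is an assumption: each answer is represented as the set of brands it named, and the brand labels are placeholders.

```python
from collections import Counter


def share_of_voice(answers: list[set[str]]) -> dict[str, float]:
    """Fraction of answers that mention each brand at least once."""
    counts = Counter(brand for mentioned in answers for brand in mentioned)
    return {brand: n / len(answers) for brand, n in counts.items()}


# Ten answers to the same query set; each set lists the brands one answer named.
answers = (
    [{"Competitor"}] * 6
    + [{"Competitor", "YourBrand"}]
    + [{"YourBrand"}]
    + [set()] * 2
)

for brand, share in sorted(share_of_voice(answers).items()):
    print(f"{brand}: {share:.0%}")
# Competitor: 70%
# YourBrand: 20%
```

Because one answer can name several brands, or none, the shares are not expected to sum to 100%.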

This metric reveals competitive dynamics that traditional SEO metrics miss entirely. You might outrank a competitor on Google for a given keyword, but if their brand dominates AI answers for the broader category, they are shaping purchase decisions before a customer ever reaches a search results page.

AirOps' 2026 research found that only 30% of brands maintain consistent visibility across consecutive AI answers. AI share of voice is volatile: a competitor publishing a well-structured, frequently-cited article can shift the balance in weeks. Monitoring it regularly is not optional.

4. AI Brand Sentiment

Brand sentiment in AI is not the same as sentiment on social media. It measures how positively, neutrally, or negatively AI platforms describe your brand when they do mention it.

AI models synthesise opinions from across the web: reviews, articles, forum discussions, news coverage. If your brand is consistently associated with trust, expertise, and reliability in these sources, AI-generated answers will reflect that. If the web contains unaddressed complaints or negative coverage, the AI will surface that too.

This metric matters because AI answers carry implicit authority. When ChatGPT says "Brand X is known for reliable customer support", that shapes perception more powerfully than a single review on a comparison site. Understanding what AI says about you, and working to improve the underlying signals, is a direct business lever.

5. Content Grounding Score

Content grounding score measures how much of your website's content AI search systems actually absorb and use when generating responses. It is one thing for an AI to know your brand exists. It is another for it to draw on your specific content when constructing answers.

This metric evaluates whether AI platforms pull facts, data, and insights from your pages, or whether they know your brand name but source their actual content from competitors. A high content grounding score means your website is functioning as a primary source of truth for AI systems in your category.

The factors that drive content grounding are specific: well-structured headings, clear factual statements, schema markup that identifies entities and relationships, and content freshness. Stale pages that are not regularly updated tend to lose AI citations over time, while pages with sequential headings and rich schema earn citations at meaningfully higher rates.

6. Entity Authority

Entity authority measures how well AI platforms understand your brand as a distinct entity: not just a keyword match, but a recognised entity with clear attributes, relationships, and category associations.

When Google's Knowledge Graph, Wikidata, or other entity databases contain structured information about your brand, AI systems can confidently reference you in answers. Without clear entity signals across the web, AI platforms may confuse your brand with similarly named entities, misattribute your products, or simply omit you from answers where you belong.

Building entity authority requires consistent NAP data (name, address, phone) across directories, structured data on your website (Organization schema, Product schema, FAQ schema), presence in knowledge bases, and a clear, consistent brand narrative across the web. This is foundational work. Without it, the other five metrics remain artificially capped.
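As an illustration of the Organization schema mentioned above, the sketch below builds a schema.org `Organization` object in Python and prints it as a JSON-LD script tag. Every value (company name, URLs, phone number, address) is a placeholder; the property names are standard schema.org vocabulary.

```python
import json

# All values below are placeholders for illustration.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "1 Example Street",
        "addressLocality": "Springfield",
        "postalCode": "00000",
        "addressCountry": "US",
    },
    # Profiles that refer to the same entity, which helps disambiguation.
    "sameAs": [
        "https://www.linkedin.com/company/example-co",
        "https://en.wikipedia.org/wiki/Example_Co",
    ],
}

json_ld = json.dumps(organization, indent=2)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

Keeping the name, address, and phone values identical to those in your directory listings is what ties this markup back to the NAP consistency point.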

LLM Visibility as the Composite: What a Complete Report Contains

The six metrics above are components. LLM visibility is the composite view: how AI models discover, reference, and recommend your brand as a whole. If you were to add an LLM visibility section to your monthly reporting, here is what it should contain:

Citation rate across platforms. How often do LLMs cite your website as a source when answering queries in your domain? Critically, almost 90% of ChatGPT's citations come from pages ranking in position 21 or lower, not the top-five results traditional SEO obsesses over. A page your SEO report ignores might be your most valuable AI asset.

Mention frequency. When users ask AI models about your industry, product category, or specific use case, does your brand appear? Not as a link to click, but as a named entity the model considers relevant. Research from AirOps, analysing more than 21,000 brand mentions across major AI systems, shows that 85% of brand mentions in AI answers originate from third-party sources. What others say about you shapes your AI visibility more than what you say about yourself.

Recommendation share. In competitive queries ("best project management tool for remote teams"), what percentage of AI responses include your brand? Top-performing brands capture 15% or more share across their core query sets. This is the AI equivalent of share of voice, and traditional SEO cannot capture it.

Sentiment and framing. LLMs do not just mention brands; they characterise them. An AI model might describe one competitor as "enterprise-grade" and another as "best for beginners," shaping user perception before they ever visit a website. LLM perception drift, the gradual shift in how models represent your brand, can change your market positioning without any change to your actual product.

Citation source analysis. When AI models do cite your brand, which pages are they pulling from? This often reveals surprising results: a three-year-old comparison page or an industry report might be your most-cited asset, while your carefully optimised homepage contributes nothing to AI visibility.

Perception accuracy audit. Periodically check how AI models describe your brand. Are the descriptions accurate? Do they reflect your current positioning? LLM perception drift means the AI's understanding of your brand can quietly fall out of sync with reality.

Training data footprint. Measure your presence in the datasets AI models learn from. Common Crawl inclusion, structured data coverage, and knowledge graph presence all contribute to whether models have baseline awareness of your brand before any real-time retrieval occurs.

At minimum, monitor ChatGPT, Google Gemini, Perplexity, and Claude; these represent the highest-traffic AI discovery channels. For comprehensive coverage, also include Google AI Overviews, Microsoft Copilot, Grok, DeepSeek, and Meta AI. Each platform has different source preferences and citation behaviour, so monitoring across all major providers gives the most complete picture.

AI Overview Appearances and Content Freshness

Two more signals deserve their own section; both sit at the intersection of traditional and AI measurement.

AI Overview appearances. Google AI Overviews, the AI-generated summaries that appear above organic results, now show up for a growing share of search queries. When your content appears as a source in an AI Overview, it gets visibility that no organic ranking position can match. Track which of your pages appear in AI Overviews and for which keywords. This metric tells you whether Google's AI considers your content authoritative enough to summarise and cite. Pages that earn AI Overview placement tend to have clear structure, specific factual claims, and strong schema markup. You can check your current AI Overview visibility with a free AI readiness scan; it takes 30 seconds.

Content freshness and decay. Even your best-performing content will decline over time. Content decay (the gradual loss of traffic, rankings, and engagement) is inevitable unless you actively maintain and update your pages. Set a quarterly review cycle: identify pages where organic traffic has dropped more than 20% from their peak. These are candidates for a content refresh: updating statistics, adding new sections, improving internal linking, and ensuring the information is still accurate. Fresh, up-to-date content performs better in both traditional search and AI citation, because AI engines prioritise recency and accuracy when selecting sources to cite.
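The 20% rule for the quarterly review can be sketched as a small filter. The input shape (a mapping from page path to a chronological list of monthly organic sessions) and the sample figures are assumptions for illustration.

```python
def decay_candidates(
    monthly_traffic: dict[str, list[int]], threshold: float = 0.20
) -> list[str]:
    """Pages whose latest month is more than `threshold` below their peak."""
    flagged = []
    for page, series in monthly_traffic.items():
        if not series:
            continue
        peak, latest = max(series), series[-1]
        if peak > 0 and (peak - latest) / peak > threshold:
            flagged.append(page)
    return flagged


# Hypothetical monthly organic sessions, oldest month first.
traffic = {
    "/guide-a": [1000, 1200, 900],  # 25% below peak: refresh candidate
    "/guide-b": [500, 480, 450],    # 10% below peak: within tolerance
    "/guide-c": [300, 350, 400],    # currently at its peak
}
print(decay_candidates(traffic))
```

Comparing against the peak rather than the previous month catches slow declines that never look dramatic in any single month.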

How AI Actually Learns About Brands

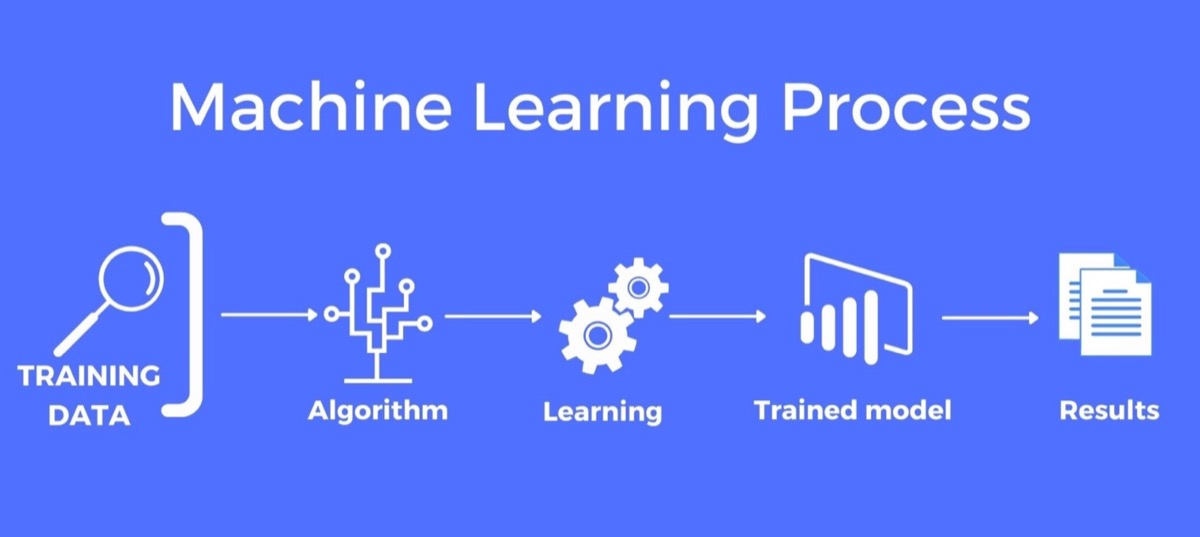

If you want to improve AI visibility metrics instead of just watching them, you need to understand the mechanism that produces them. Large language models acquire knowledge about brands through two distinct channels, and both have implications for how you should think about measurement.

Training data frequency. LLMs like GPT-4 and Claude are trained on massive web crawls, primarily sourced from Common Crawl and similar datasets. These crawls do not treat all pages equally. Pages with more inbound links, higher traffic, and greater crawl frequency appear more often in training datasets. When your brand is mentioned on a page that Common Crawl indexes repeatedly, that mention gets reinforced across multiple snapshots of the training data.

A mention on a low-traffic personal blog might appear once. A mention on a high-traffic industry site might appear in dozens of crawl snapshots spanning years. This repetition matters. LLMs learn through pattern frequency: the more often a brand appears in a specific context during training, the stronger the association becomes in the model's parameters. Being mentioned on TechCrunch or Forbes is not just a PR win. It is literally encoding your brand into the AI's understanding of your industry.

Retrieval source authority. When AI systems need current information, they search the web in real time. ChatGPT uses Bing, Gemini uses Google, and Perplexity uses its own index. These retrieval systems apply authority signals when selecting which pages to surface, and traffic volume is a proxy for those signals. High-traffic pages rank higher in retrieval results, which means mentions of your brand on those pages are more likely to be pulled into AI-generated answers.

This creates a compounding effect. A brand mentioned on authoritative, high-traffic pages gets encoded in training data and prioritised in real-time retrieval. Both channels reinforce each other. Understanding how ChatGPT sources the web reveals just how central page authority is to this process.

Why High-Traffic Page Mentions Dominate the Numbers

Several data points connect page traffic directly to AI mention rates:

Common Crawl representation is skewed toward popular sites. Analysis of Common Crawl data shows that the top 10,000 domains by traffic account for a disproportionate share of crawled pages. Sites like Wikipedia, Reddit, major news outlets, and industry publications are crawled far more frequently than niche sites. Any brand mention on these high-traffic domains gets dramatically more exposure in training datasets than equivalent mentions elsewhere.

Retrieval systems favour authoritative sources. When Perplexity or ChatGPT with web browsing retrieves information, they pull from search indexes that weight page authority heavily. A brand mentioned in a Forbes article ranks higher in retrieval results than the same brand mentioned on an obscure blog, even if the blog article is more detailed and accurate. This means high-traffic page mentions directly translate to higher retrieval probability.

Entity recognition strengthens with cross-source mentions. AI models build internal entity maps: associations between brands, products, industries, and concepts. When your brand appears across multiple high-traffic sources, the model develops a stronger entity representation. This is why some brands get chosen by AI engines while competitors with similar products remain invisible.

LLM Mentions data confirms the pattern. LLM Mentions tracking, which measures how often AI platforms reference specific brands in their responses, shows a clear correlation between a brand's presence on high-traffic, authoritative pages and its frequency of AI mentions. Brands with mentions concentrated on low-authority pages see measurably fewer AI citations than brands with equivalent total mention counts distributed across high-traffic sources.

Why Not Every Mention Moves the Needle

Raw mention count is misleading. Four factors determine how much a given mention contributes to your AI visibility metrics:

Context determines association. An LLM does not just learn that your brand exists; it learns what your brand is associated with. A mention on a high-traffic page about "best project management tools" creates an association between your brand and project management. A mention on a random listicle with 500 other brands creates noise, not signal. The context of the mention shapes the factors that determine AI recommendations for your category.

Recency affects retrieval weight. Retrieval systems prioritise recent content. A mention on a high-traffic page published last month carries more weight in real-time retrieval than one published three years ago. Even high-traffic page mentions lose retrieval influence over time if the content is not updated.

Sentiment shapes recommendation probability. AI models do not just register mentions; they register sentiment. A positive mention on a high-traffic review site strengthens the likelihood of a favourable AI recommendation. A negative mention on the same site can actively work against you. The AI learns tone and context, not just presence.

Specificity drives citation. Generic brand mentions ("Company X is one of many players in this space") create weaker associations than specific, authoritative mentions ("Company X's approach to neural search indexing sets the standard for the industry"). High-traffic pages that mention your brand with specific claims, data points, or expert context create stronger signals for AI models to cite.

Building Your AI-First Measurement Stack

Tracking these metrics individually is useful, but the real power comes from reading them together. A page with high organic traffic, low engagement time, and zero AI citations tells a different story than a page with moderate traffic, strong engagement, and consistent AI mentions. The first page needs a content overhaul; the second needs promotion.

Here is a practical stack that covers both layers without overwhelming a small team:

Monthly cadence, weekly spot checks. Run a full AI visibility measurement monthly (citation frequency, discoverability rate, share of voice, sentiment) and do lighter weekly spot checks on your most important queries. AI visibility can shift in weeks; quarterly-only tracking misses the signal.

Brand mention tracking across AI platforms. Query the major AI models (ChatGPT, Perplexity, Gemini, Claude, Google AI, Grok, Copilot, DeepSeek, Meta AI) with your core industry queries and track whether your brand appears. Monitoring across multiple platforms is essential because each model has different training data, retrieval mechanisms, and citation preferences.

Competitive share of AI mentions. Track how your mention frequency compares to your top three competitors across the same query set. This is where the real strategic insight lives. If a competitor consistently appears in AI responses for queries you dominate in traditional search, that gap will compound as AI-powered discovery grows.

Citation source audit. Once a quarter, pull the list of pages AI models cite when referencing your brand. The results often reshape your content strategy: the pages you thought were your AI assets are usually not the ones doing the work.

Perception accuracy check. Periodically ask the major AI platforms "what is [your brand] known for?" and record the answer. When the description drifts from how you want to be positioned, that is a signal to audit the public sources the model is learning from.

Traditional engagement layer. Keep tracking organic traffic, rankings, CTR, engagement time, bounce rate, conversions, and backlinks. These feed the AI visibility layer; do not drop them just because they are no longer sufficient on their own.

Build a simple dashboard (even a spreadsheet works) that tracks these metrics monthly for your top 20 pages and top 20 queries. After three months, you will have enough data to see patterns: which content types perform best, which topics earn AI citations, and where your biggest gaps are.
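If you would rather generate that spreadsheet than maintain it by hand, a minimal sketch using Python's standard `csv` module might look like the following. The column names and sample rows are assumptions; swap in whichever metrics from this guide you actually track.

```python
import csv
import io

# Hypothetical column set covering both the traditional and AI layers.
FIELDS = [
    "month",
    "page",
    "organic_sessions",
    "avg_engagement_seconds",
    "ai_citations",
    "share_of_voice",
]

rows = [
    {"month": "2026-01", "page": "/guide-a", "organic_sessions": 1200,
     "avg_engagement_seconds": 74, "ai_citations": 3, "share_of_voice": 0.20},
    {"month": "2026-01", "page": "/guide-b", "organic_sessions": 450,
     "avg_engagement_seconds": 132, "ai_citations": 9, "share_of_voice": 0.35},
]


def to_csv(records: list[dict]) -> str:
    """Serialise monthly metric rows to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


output = to_csv(rows)
print(output)
```

Appending one row per page per month keeps the file easy to pivot later, whether by page, by month, or by metric.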

The fastest way to establish your baseline is with a structured AI visibility audit framework. SwingIntel's free scan gives you an instant AI readiness score in 30 seconds, a preview of how AI-ready your website is. For the complete picture, including live citation testing across 9 AI platforms, competitive benchmarking, and a strategic roadmap, the AI Readiness Audit delivers expert research with specific recommendations for improving each of the metrics in this guide.

The Compounding Advantage

The brands that understand the connection between AI visibility metrics and the mechanics behind them are building an advantage that will be nearly impossible for late movers to overcome.

Here is why. AI models update their training data periodically, but the associations they learn persist across updates. A brand that has been consistently mentioned on high-traffic pages across multiple training data snapshots has a deeply embedded presence in the model's parameters. A competitor starting from scratch would need years of equivalent mentions to catch up, and by then the leading brand has accumulated even more.

This is the same dynamic that makes winning in AI-powered search a winner-take-all game. The brands that invest in measurable AI visibility now are not just improving today's numbers; they are securing tomorrow's.

The shift from traditional search to AI-assisted discovery is not coming. It is here. The only question is whether your reporting stack reflects that reality or continues measuring a shrinking portion of how customers actually find businesses.

Your SEO report is not broken. It is just not finished. The metrics in this guide are what complete it.

Frequently Asked Questions

What are AI visibility metrics?

AI visibility metrics measure whether your brand appears in AI-generated answers from platforms like ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude. Unlike traditional SEO metrics such as CTR and rankings, they track citation frequency, mention rates, share of voice, sentiment, content grounding, and entity authority: the signals that determine visibility in a zero-click search environment.

Is CTR still important for SEO in 2026?

CTR remains relevant for transactional and brand-specific queries where users still click through to websites. For informational queries, which represent the majority of searches, AI platforms increasingly deliver answers without generating clicks. A comprehensive measurement strategy needs both traditional metrics and AI visibility metrics to capture full reach.

How do you measure AI citation frequency?

Measuring AI citation frequency requires querying multiple AI platforms with relevant prompts and recording whether your brand appears in the responses. Manual testing is possible but impractical at scale. SwingIntel automates this across 9 AI platforms with thousands of category-specific AI queries, delivering a quantified citation frequency baseline as part of the AI Readiness Audit.

How often should brands track AI visibility metrics?

Monthly tracking provides enough data to spot trends without overwhelming your team, with weekly spot checks on priority queries. AI visibility can shift quickly: a competitor publishing well-structured content or your own content going stale can change citation rates within weeks. Quarterly deep audits combined with monthly monitoring offer the best balance of depth and frequency.

Why might a page rank well on Google but be invisible to AI platforms?

AI search engines evaluate content differently from Google's ranking algorithm. A page might rank for keywords but lack the clear, extractable statements, structured data, and factual density that AI models need to cite it. Research shows that almost 90% of ChatGPT's citations come from pages ranking in position 21 or lower. Google indexing is a baseline, not a guarantee of AI visibility.

Do mentions on high-traffic pages directly affect AI citations?

Yes. High-traffic pages appear more frequently in AI training data crawls and rank higher in real-time retrieval indexes. Both channels reinforce each other, creating a compounding effect where mentions on authoritative pages are more likely to be absorbed into AI-generated answers. Context, recency, sentiment, and specificity of those mentions all determine how strongly they influence AI recommendations.

Can I track AI visibility in Google Analytics?

Not directly. Google Analytics cannot attribute visits that originate from AI recommendations, because most AI-assisted discovery appears as direct traffic in your analytics. Tracking AI visibility requires querying AI models directly with relevant prompts and analysing whether your brand appears in the responses. Dedicated AI visibility tools are built specifically for this purpose.

Which AI platforms should I monitor?

At minimum, monitor ChatGPT, Google Gemini, Perplexity, and Claude, the highest-traffic AI discovery channels. For comprehensive coverage, also include Google AI Overviews, Microsoft Copilot, Grok, DeepSeek, and Meta AI. Each platform has different source preferences and citation behaviour, so monitoring across all major providers gives the most complete picture.

Your AI visibility starts with knowing where you stand today. Check your current AI visibility and see exactly which signals AI models are using, or ignoring, when they generate answers about your industry.