Roughly half of all Google searches in the US now trigger an AI-generated answer, and millions of additional queries happen inside ChatGPT, Perplexity, and Claude every day. When someone asks one of these models a question in your category, the model either names your brand or names your competitor. There is no page two in an AI answer. LLM Optimization, commonly abbreviated as LLMO, is the discipline that decides which side of that line you land on.

This guide brings together everything that matters: what LLMO actually is, how LLMs find and choose sources, the technical foundations most sites get wrong, the seven strategies that move brands from invisible to cited, the distribution layer ("LLM seeding") that extends your reach beyond your own domain, and the metrics that tell you whether any of it is working.

Key Takeaways

- LLM Optimization is the practice of structuring your brand's online presence so that large language models can find, understand, and cite your business. It targets mention probability and citation accuracy, not ranking positions.

- LLM visibility depends on three pathways: training data presence (Common Crawl inclusion), retrieval-augmented generation (real-time web search by AI models), and citation selection based on factual density, authority, and structural clarity.

- Only about 12% of the URLs cited by AI assistants rank in Google's top 10, and roughly 80% don't appear in Google's top 100 at all. Ahrefs' analysis of 15,000 prompts shows that the pages you have optimised for Google are often not the pages AI models actually pull from.

- Front-loading answers pays off: 44.2% of ChatGPT citations come from the first 30% of a page, according to a study of 3 million ChatGPT responses covered by Search Engine Land. Separately, Seer Interactive's research found that brands cited in Google AI Overviews see a 35% lift in organic clicks compared to uncited competitors.

- LLMO complements traditional SEO rather than replacing it. Gartner predicts traditional search volume will drop 25% by 2026 as users shift to AI assistants, and most LLMO best practices (clear structure, factual density, schema markup) also improve Google rankings.

What Is LLM Optimization?

LLM Optimization (LLMO) is the practice of structuring your brand's online presence so that large language models can find, understand, and cite your business in their responses. Where traditional SEO targets ranking positions on search engine results pages, LLMO targets mention probability and citation accuracy across AI-generated answers.

The term covers everything from whether your content appears in AI training data to whether retrieval systems can extract useful information from your pages in real time. Other names for the same discipline include Generative Engine Optimization (GEO) and LLM SEO. All describe the same goal: making your brand visible to the AI systems that increasingly mediate how people discover products, services, and information.

LLMO is not a replacement for SEO. It is an additional layer that addresses a fundamentally different discovery mechanism. Google Search ranks links. LLMs synthesise answers. The signals that drive each outcome overlap in places but diverge in critical ways, and that divergence is why brands with strong Google rankings can still be completely invisible inside AI responses.

How LLMO Differs From Traditional SEO

Traditional SEO is built around keywords, backlinks, and page authority. You optimise a page to rank for a specific query, and success means appearing on the first page of search results. The user clicks your link, lands on your site, and engages with your content.

LLMO works differently. An AI model does not return a list of links; it generates a response. Your brand either appears within that response as a recommendation, a citation, or a named comparison, or it does not appear at all. There is no "page two" in an AI-generated answer, and there are no second chances inside a single response.

The practical differences compound:

- Citations, not rankings. The goal is to be cited as a source or recommended by name, not to rank for a keyword position.

- Comprehension over crawling. Traditional SEO optimises for crawlers; LLMO optimises for comprehension. You are not trying to rank a page; you are trying to become the answer.

- Semantic clarity over keyword density. LLMs favour content that defines terms clearly, connects related concepts, and presents information in extractable units. Keyword stuffing actively works against you.

- Factual density matters. A landmark study analysing 3 million ChatGPT responses found that 44.2% of citations come from the first 30% of a page, following a "ski ramp" pattern where citation likelihood is highest at the top and drops off sharply. AI models prefer content that leads with the answer.

- Training data presence. Unlike traditional search, LLMs have a knowledge layer built from web crawls like Common Crawl. If your site was not crawled, the model may have no baseline awareness of your brand at all.

- Distributed authority. Brand mentions across trusted third-party sources (Wikipedia, Reddit, industry publications, LinkedIn) now matter more than backlinks for AI citations.

The Three Pathways: How LLMs Find and Use Your Content

Understanding how LLMs source information is the foundation of any optimisation strategy. There are three primary pathways, and a brand needs to be present on all three to compete seriously in AI search.

1. Training data presence. Models like GPT-4, Claude, and Gemini are trained on massive web crawls, with Common Crawl being the largest public dataset that feeds most LLM training pipelines. If your website was included in those crawls, the model has baseline awareness of your brand. This is the deepest form of LLM visibility because it shapes the model's knowledge even without real-time retrieval. You can check your training data footprint through Common Crawl's CDX index, and sites with a larger footprint have measurably higher brand recall in AI-generated answers.

2. Retrieval-augmented generation (RAG). When a model needs current information, it searches the web in real time. ChatGPT uses Bing, Perplexity uses its own web index, and Gemini taps Google Search. Your site must be crawlable by these retrieval systems and structured so they can extract relevant passages quickly. If your site challenges bots with CAPTCHAs, JavaScript walls, or aggressive rate limiting, the LLM will cite a competitor instead.

3. Citation selection. Even when an LLM retrieves your content, it decides whether to cite you based on factual density, source authority, content freshness, and structural clarity. LLM visibility correlates more closely with semantic precision and structural clarity than with traditional authority signals like raw backlink counts. Otterly.ai's AI Citations Report, which analysed over one million data points, found that community platforms (Reddit, Quora) capture roughly 52.5% of citations versus 47.5% for brand domains, a gap that shrinks only for brands that actively optimise for how LLMs consume content.

Why Google Rankings Don't Translate to AI Citations

Here is the data point that should shift your strategy: only about 12% of AI citations rank in Google's top 10, and roughly 80% don't appear anywhere in Google's top 100 for the original query. Community sources like Reddit and Wikipedia receive more citations than official brand marketing pages.

This means the pages you have spent years optimising for Google may not be the pages AI models are pulling from. LLMs have their own retrieval logic, and it rewards a different set of signals:

- Structured, extractable content: tables, lists, labelled sections, clear headings

- Third-party validation: mentions on review platforms, industry publications, and community forums

- Consistent repetition: the same brand associated with the same topics across multiple independent sources

- Freshness: recently published or updated content, especially for evolving topics

If your entire content strategy lives on your own domain and targets Google's ranking algorithm, you are optimising for one channel while the fastest-growing discovery channel goes unserved. Understanding what makes AI engines choose certain brands is the first step toward a strategy that works across both traditional and AI search.

The LLMO Foundation: Technical and Structural Setup

Before tactics, the plumbing. Most brands that fail at LLMO fail here, not because their content is weak, but because their site is actively excluding itself from AI discovery at the technical layer.

Open Your Site to AI Crawlers

The single most common reason businesses are invisible to LLMs is blocking AI crawlers in robots.txt. Many sites inherited blanket bot-blocking rules or added exclusions intentionally before LLM search became important. Check your robots.txt and explicitly allow the crawlers that matter:

    User-agent: GPTBot
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Google-Extended
    Allow: /

Beyond robots.txt, ensure your site does not serve CAPTCHAs, JavaScript walls, or aggressive rate limiting to bot user agents. LLM retrieval systems need to read your pages quickly. For the full list of signals to audit, the AI visibility checklist covers every technical, structural, and content factor that AI engines evaluate.
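To confirm that a robots.txt file really admits these crawlers, a check like the following can help. It is a minimal sketch using Python's standard `urllib.robotparser`; the crawler tokens come from the example above, while the function name, the sample file, and the `https://example.com/` URL are illustrative assumptions.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens taken from the robots.txt example above.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawler tokens that robots_txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(sample))
```

In practice you would pass in the text of your live robots.txt and test a few representative URLs, since rules can differ by path. This only checks robots.txt rules; it does not detect CAPTCHAs or rate limiting.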

Implement the llms.txt Protocol

The llms.txt protocol is an emerging standard that gives LLMs a machine-readable summary of your website. It works like robots.txt but serves the opposite purpose: instead of telling bots what not to crawl, it tells them what your site contains and where to find the most important content.

A well-structured llms.txt file placed at your domain root provides LLMs with a concise map of your business: what you do, what your key pages are, and how content is organised. This is especially valuable for retrieval systems, because it helps them identify which of your pages is most relevant to a given query without having to crawl the entire site. Not every LLM supports llms.txt yet, but adoption is growing. Implementing it now costs almost nothing and positions you ahead of competitors who have not adopted it.
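As a rough illustration, the sketch below renders an llms.txt body from a few structured inputs. The layout (an H1 title, a blockquote summary, then H2 sections of markdown links) follows the public llms.txt proposal as commonly described, so treat it as an assumption and check the current proposal before publishing. The business name, URLs, and the `build_llms_txt` helper are hypothetical.

```python
def build_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            # Each entry is a markdown link followed by a short description.
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site map for an example business.
example = build_llms_txt(
    "Example Co",
    "Example Co sells scheduling software for dental practices.",
    {
        "Key pages": [
            ("Pricing", "https://example.com/pricing", "Plans and costs"),
            ("Docs", "https://example.com/docs", "Setup and API guides"),
        ]
    },
)
print(example)
```

The output would be saved as `llms.txt` at the domain root, as described above.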

Structure Site Architecture for AI Retrieval

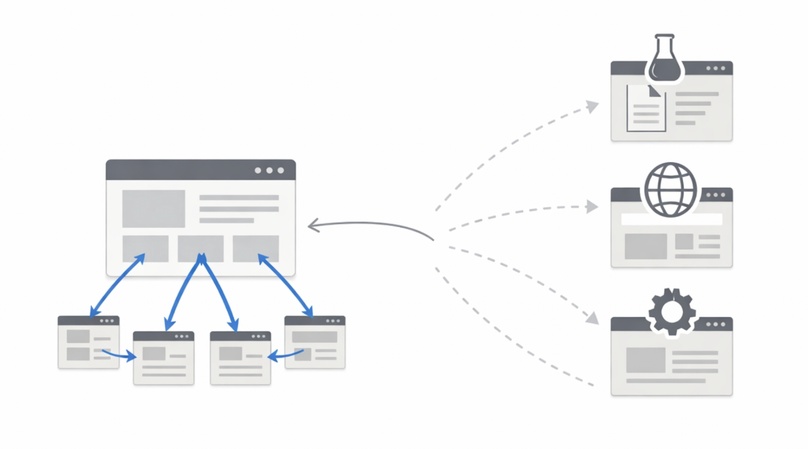

Traditional website architecture is designed for human navigation: menus, breadcrumbs, visual hierarchy. LLM retrieval systems navigate differently. Three architectural patterns improve LLM discoverability:

- Hub-and-spoke content models. Organise content around central topic pages (hubs) that link to detailed subtopic pages (spokes). When an LLM retrieves a hub page, it sees the full scope of your expertise and can follow links to find specifics. This mirrors how LLMs evaluate topical authority: they cite sources that demonstrate comprehensive coverage, not isolated pages.

- Clean URL structures. Use descriptive, hierarchical URLs that communicate content scope. /blog/ai-search-optimization tells a retrieval system more than /blog/post-12847.

- Comprehensive XML sitemaps. Keep your sitemap updated with accurate lastmod dates and logical organisation. Retrieval systems use sitemaps as a discovery mechanism.
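For the sitemap point, here is a minimal sketch of generating a sitemap with lastmod dates using Python's standard library. The namespace is the one defined by the sitemaps.org protocol; the page URLs, dates, and the `build_sitemap` helper are assumptions for illustration.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, date]) -> str:
    """Render a sitemap with one <url> entry per page and its last-modified date."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, modified in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod uses the ISO date form, e.g. 2025-01-15.
        ET.SubElement(url, "lastmod").text = modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages and modification dates.
sitemap_xml = build_sitemap(
    {
        "https://example.com/blog/ai-search-optimization": date(2025, 1, 15),
        "https://example.com/": date(2025, 2, 1),
    }
)
print(sitemap_xml)
```

The key design choice is to feed lastmod from the real modification date of each page (for example, from your CMS) rather than from the time the sitemap was generated.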

Deploy Structured Data Across the Entire Site

Schema.org structured data (JSON-LD) is the most direct way to communicate with retrieval systems in a language they understand natively. Most websites implement structured data inconsistently: a few pages have it, most do not. For LLM search, you need site-wide coverage.

- Organization schema on your homepage establishes your business as a recognisable entity. Include name, URL, logo, description, founding date, social profiles, and contact information.

- WebSite schema with a SearchAction tells LLMs how your site's search function works, which is increasingly relevant as AI agents become capable of interacting with sites programmatically.

- Article and BlogPosting schemas on all editorial content provide publication dates, authors, and topic signals that LLMs use to assess freshness and credibility.

- FAQPage schema on Q&A pages maps directly to the conversational query patterns that drive LLM search.

Consistent structured data across your site builds a machine-readable knowledge layer that LLMs can parse instantly. For a deeper look at how citation engines use this data, see the AI Citation Playbook.
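As an example of the Organization schema described above, the sketch below builds the JSON-LD with Python and wraps it in the script element that goes in a page's head. The property names (`name`, `url`, `logo`, `description`, `foundingDate`, `sameAs`) are standard schema.org Organization properties; the company details and helper names are hypothetical.

```python
import json


def organization_jsonld(
    name: str,
    url: str,
    logo: str,
    description: str,
    founding_date: str,
    profiles: list[str],
) -> str:
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "description": description,
        "foundingDate": founding_date,
        "sameAs": profiles,  # social and directory profiles for the same entity
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# Hypothetical organisation details.
org = organization_jsonld(
    "Example Co",
    "https://example.com",
    "https://example.com/logo.png",
    "Scheduling software for dental practices.",
    "2019-04-01",
    ["https://www.linkedin.com/company/example-co"],
)
print(script_tag(org))
```

Generating the markup from one source of truth, rather than hand-editing it per page, makes it easier to keep the description identical everywhere it appears.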

Make Every Page Self-Contained and Extractable

LLM retrieval does not read your site in order. It lands on individual pages, reads them, and decides whether to cite specific passages. This means every page must function as a standalone unit: an LLM should understand what the page is about, who published it, and what it claims without visiting any other page on your site.

Practical requirements for LLM-extractable pages:

- State the topic and key conclusion in the first paragraph

- Use H2 headings that describe each section as a complete phrase

- Define terms where they first appear, because LLMs extract definitions and reuse them in responses

- Include specific data points, figures, and named entities rather than generic claims

- Attribute facts to sources, because LLMs are more likely to cite content that cites its own sources

The difference between a page that gets cited and one that gets ignored is often specificity. "We help businesses grow" tells an LLM nothing. "SwingIntel's AI Readiness Audit checks 19 factors across structured data, content clarity, and technical signals" gives the LLM a citable fact.

The 7 LLMO Strategies That Get AI to Recommend Your Brand

Foundations make you findable. The following seven strategies make you chosen. Seer Interactive's analysis of 3,119 informational queries found that brands cited in Google AI Overviews earn 35% more organic clicks and 91% more paid clicks than uncited competitors, and AI-driven visitors generally convert at stronger rates than standard organic traffic because the AI has already vetted the brand before the click.

1. Build Authority Across Trusted Sources

LLMs pull heavily from sources they trust: Wikipedia, Reddit, LinkedIn, industry publications, and high-authority blogs. A brand that appears on a single website is invisible to most models. A brand mentioned consistently across multiple trusted sources becomes a named entity in AI knowledge.

Focus on earning mentions in places LLMs already cite. Guest posts on industry publications, mentions in relevant Reddit discussions, LinkedIn thought leadership, and inclusion in listicles and comparison articles all compound into distributed authority. Recent industry analyses consistently find that distributed brand mentions across trusted third-party sources now carry more weight for LLM citations than raw backlink counts.

2. Make Your Content Extractable

AI models do not read your website the way humans do. They extract discrete passages (a single paragraph, a definition, a data point) and weave those extracts into generated answers. If your content is not structured for extraction, the model will skip it.

Write self-contained sections under clear H2 headings. Lead each section with a direct, factual answer before expanding with context. Include specific numbers, dates, and claims that a model can extract verbatim. Our guide on optimising content for AI search covers the specific techniques that make content LLM-friendly.

3. Own Your Entity Definition

LLMs build internal knowledge graphs connecting brands to categories, attributes, and relationships. If your site does not clearly define what your brand is, what it does, and who it serves, the model will either guess incorrectly or ignore you entirely.

Implement JSON-LD structured data: Organization, Product, Service, and FAQPage schema at minimum. Use consistent naming across every platform. When your About page says "AI-powered marketing platform", your LinkedIn says the same, and your schema markup confirms it, LLMs build a strong, consistent entity association. Strong entity presence is one of the most reliable predictors of AI citations.

4. Get Into the Training Data

Models like GPT-4, Claude, and Gemini are trained on massive web crawls. If your site was not included in those crawls, the model has no baseline awareness of your brand, and it cannot recommend what it has never seen. Check your robots.txt to ensure AI crawlers are not blocked, and verify your presence in Common Crawl. SwingIntel's AI Readiness Audit measures your training data presence as one of its core scoring dimensions.
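One way to verify Common Crawl presence is to query its public URL index. The sketch below only builds the query URL and parses a response body, so it runs without network access. The endpoint pattern and the `url`, `status`, and `timestamp` fields reflect the index's JSON output as generally documented, but treat them as assumptions; `CC-MAIN-2024-10` is a placeholder crawl ID (current IDs are listed on the Common Crawl index site), and the sample records are made up.

```python
import json
from urllib.parse import urlencode


def cdx_query_url(domain: str, crawl_id: str) -> str:
    """Build a Common Crawl index query for every captured URL under domain."""
    params = urlencode({"url": f"{domain}/*", "output": "json"})
    return f"https://index.commoncrawl.org/{crawl_id}-index?{params}"


def parse_cdx_lines(body: str) -> list[dict]:
    """Parse the newline-delimited JSON records the index returns."""
    return [json.loads(line) for line in body.splitlines() if line.strip()]


def count_ok_captures(records: list[dict]) -> int:
    """Count captures that were fetched with HTTP status 200."""
    return sum(1 for record in records if record.get("status") == "200")


# Hypothetical response body, shaped like index output but not real data.
sample_body = (
    '{"url": "https://example.com/", "status": "200", "timestamp": "20240301000000"}\n'
    '{"url": "https://example.com/old", "status": "404", "timestamp": "20240301000100"}\n'
)

print(cdx_query_url("example.com", "CC-MAIN-2024-10"))
print(count_ok_captures(parse_cdx_lines(sample_body)))
```

To run it against live data, fetch the generated URL with any HTTP client and pass the response text to `parse_cdx_lines`. Repeating the query across several crawl IDs gives a rough view of how your footprint changes over time.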

5. Refresh Content Quarterly

Content freshness directly affects whether LLMs cite you. Industry analyses of AI citation patterns consistently show that older content loses citation share over time as RAG-equipped models prioritise recently updated pages, especially for evolving topics.

Update your most important pages at least every 90 days. Add new data points, refresh statistics, and revise recommendations. This is not about rewriting articles; it is about ensuring the factual claims AI models extract from your content remain current. Content decay is one of the most common reasons brands lose AI visibility over time.
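A refresh schedule like this is easy to automate. The following sketch flags pages whose last update is older than a 90-day threshold; the URLs, dates, and the `stale_pages` helper are hypothetical, and in practice the dates would come from your CMS or sitemap.

```python
from datetime import date, timedelta


def stale_pages(
    last_updated: dict[str, date],
    today: date,
    max_age_days: int = 90,
) -> list[str]:
    """Return URLs whose last update is more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Hypothetical pages and their last-updated dates.
pages = {
    "https://example.com/guide": date(2025, 1, 10),
    "https://example.com/pricing": date(2025, 5, 1),
}

print(stale_pages(pages, today=date(2025, 6, 1)))
```

Passing `today` explicitly, instead of reading the clock inside the function, keeps the check repeatable and easy to test.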

6. Target the Queries AI Actually Answers

Not all queries trigger AI-generated answers equally. Questions that start with "best", "top", "compare", "vs", and "alternatives" consistently produce AI responses where brands are named. These are the queries where LLMO has the highest impact.

Create content specifically targeting these comparison and recommendation queries for your category. A page titled "Best CRM for Small Businesses" that defines your product's position clearly, includes structured comparisons, and presents factual differentiators gives LLMs exactly what they need to include you. Understanding how AI engines choose which brands to surface helps you target the right queries.

7. Monitor and Measure Your AI Presence

You cannot optimise what you do not measure. Manually querying ChatGPT, Perplexity, and Gemini for your brand is a starting point, but systematic citation testing across multiple providers gives you a measurable baseline and tracks progress over time.

Track three metrics: mention rate (how often AI names you in relevant queries), citation rate (how often your domain appears as a source), and sentiment (whether AI describes your brand positively). Industry benchmarks suggest aiming for a 60% mention rate across at least three major LLMs for your core category queries.
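Mention rate and citation rate are simple ratios once you have a set of stored AI responses. The sketch below assumes a hypothetical `AIResponse` record that you populate yourself (for example, by saving answers from each assistant for a fixed list of prompts); it does not call any AI provider, and sentiment is left out because it needs a separate classifier.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AIResponse:
    """One stored answer from an AI assistant (hypothetical record shape)."""
    prompt: str
    text: str
    cited_urls: list[str] = field(default_factory=list)


def mention_rate(responses: list[AIResponse], brand: str) -> float:
    """Share of responses whose text names the brand (case-insensitive)."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand.lower() in r.text.lower())
    return hits / len(responses)


def cites_domain(response: AIResponse, domain: str) -> bool:
    """True if any cited URL is on the domain or one of its subdomains."""
    for url in response.cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_rate(responses: list[AIResponse], domain: str) -> float:
    """Share of responses that cite at least one URL on the domain."""
    if not responses:
        return 0.0
    return sum(1 for r in responses if cites_domain(r, domain)) / len(responses)


# Hypothetical stored responses for four category prompts.
responses = [
    AIResponse("best crm", "Acme CRM is a strong option.", ["https://www.acme.com/crm"]),
    AIResponse("top crm", "Consider Globex or Acme CRM.", ["https://reddit.com/r/crm"]),
    AIResponse("crm alternatives", "Globex and Initech lead here."),
    AIResponse("crm vs", "acme crm compares well on price."),
]

print(mention_rate(responses, "Acme CRM"), citation_rate(responses, "acme.com"))
```

Plain substring matching will miss misspellings and may over-count brands whose names are common words, so treat the numbers as a baseline rather than an exact measurement.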

The LLM Seeding Framework: Publish, Distribute, Reinforce

The seven strategies above live mostly on your own domain. But LLMs build citation confidence through repetition across independent sources. The distribution layer, what we call LLM seeding, is what bridges the gap between a well-optimised website and a brand that actually gets named in AI answers.

LLM seeding is the practice of publishing and distributing content so that large language models can easily find, understand, and reference your brand when answering user questions. It is not about gaming an algorithm. It is about making your brand the most obvious, well-documented answer in the places AI models actually look.

Effective LLM seeding follows a three-part cycle. Each phase builds on the previous one, and skipping any of them weakens the entire strategy.

Publish Cite-Worthy Content

Not all content gets cited. AI models extract information from pages that make extraction easy and credible. The formats that consistently earn citations include:

- Comparison guides with structured tables ("Product A vs Product B")

- Original research with specific data points and named methodologies

- FAQ sections with 8-10 substantive, well-answered questions

- How-to guides with clear step-by-step instructions

- Expert roundups with attributed quotes and credentials

The key word is extractable. If a language model can pull a clean, self-contained answer from your content, it will. If your insights are buried in narrative paragraphs with no structure, they will not surface. This is why content structure matters for AI visibility: AI models parse content in chunks, and each chunk needs to stand on its own as a complete, citable unit.

Distribute Across Trusted Sources

Publishing on your own site is necessary but not sufficient. When multiple trusted platforms mention your brand in the same context, models treat that as a stronger signal. High-value distribution channels for LLM seeding:

| Channel | Why LLMs Trust It | Action |
|---|---|---|
| Industry publications | Editorial oversight, domain authority | Guest posts, contributed articles, expert commentary |
| Review platforms (G2, Capterra, Trustpilot) | User-generated, structured data | Encourage detailed customer reviews |
| Reddit and community forums | High citation rate in ChatGPT specifically | Genuine participation, not promotion |
| YouTube | Transcripts are crawlable, high trust | Product walkthroughs, comparison videos |
| LinkedIn | Professional context, rapid AI indexing | Consistent thought leadership posts |
| Wikipedia (where eligible) | Highest trust signal for LLMs | Ensure your brand page is accurate and sourced |

The goal is not to spam every platform. It is to ensure your brand appears in the specific contexts where users ask questions your product answers.

Reinforce With Consistency

LLM seeding is not a campaign. It is a sustained practice. RAG systems query the web in real time, and training data is updated periodically. Brands that publish consistently maintain their presence. Brands that publish once and stop fade from AI responses.

Reinforcement means updating existing content when products, pricing, or positioning change; maintaining consistent messaging across all distribution channels; monitoring AI responses to catch when your brand appears or disappears; and republishing strategically to keep content fresh in retrieval indexes. A six-month-old comparison guide may lose its citation edge to a competitor's freshly published version.

Five Accelerator Tactics

The framework gives you the structure. These tactics give you speed.

Name your frameworks and methodologies. When you attach a proprietary name to your approach (a scoring model, a methodology, a framework), LLMs treat it as a distinct entity. Named concepts are easier for models to reference and harder for competitors to replicate. If your brand created the "X Framework", AI models will cite you when users ask about it.

Lead with original data. LLMs are hungry for specific numbers. "We analysed 100,000 websites" is citable. "Many websites struggle with visibility" is not. Original research, even small-scale studies or internal data, gives AI models something concrete to reference. The more specific and sourced the data point, the more likely it gets cited.

Build topical authority, not just pages. AI models do not evaluate individual pages in isolation. They assess whether a domain consistently covers a topic with depth and expertise. A single blog post about LLMO will not build citation confidence. Twenty interconnected posts covering every angle of AI search visibility will. This is why topical clusters matter more for AI visibility than they ever did for traditional SEO.

Optimise for the question, not the keyword. Traditional keyword targeting optimises for search volume. LLM seeding optimises for the actual questions users ask AI assistants, which are longer, more conversational, and more specific. Instead of targeting "AI visibility tools", create content that directly answers "What is the best tool to check if my brand appears in ChatGPT results?" Prompt research for AI SEO is the LLM equivalent of keyword research.

Get your brand on third-party lists. "Best X for Y" lists on third-party sites are citation magnets. When an industry publication ranks your product in a comparison, that structured format is exactly what LLMs extract and cite. Invest in PR, partnerships, and review cultivation that gets your brand onto these lists, because they carry more weight in AI responses than your own marketing pages.

How to Measure LLMO Success

Traditional analytics will not capture the full picture. LLMO success requires tracking metrics most businesses do not monitor yet:

- AI citation frequency: how often your brand appears in responses from ChatGPT, Perplexity, Gemini, Claude, Google AI, Grok, DeepSeek, Microsoft Copilot, and Meta AI

- Citation context: whether your brand is mentioned as a recommendation, a comparison, or just a passing reference

- Source diversity: how many independent sources mention your brand in contexts relevant to your offering

- Response positioning: where in the AI response your brand appears (first mention vs. last in a list)

- Retrieval coverage: whether your key pages appear in AI-powered search results from platforms like Perplexity and Google AI Overviews

- Training data presence: whether your domain appears in the Common Crawl index that feeds most LLM training pipelines

- Sentiment accuracy: whether LLMs describe your brand correctly, or get key facts wrong
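Response positioning from the list above can be approximated by ordering brands by where they first appear in an answer. This is a minimal sketch with hypothetical brand names and response text; it uses plain substring matching, so it is a rough proxy rather than a full entity matcher.

```python
def mention_order(text: str, brands: list[str]) -> list[str]:
    """Return the brands found in text, ordered by first appearance."""
    lowered = text.lower()
    found = [(lowered.find(brand.lower()), brand) for brand in brands]
    # find() returns -1 for brands that never appear, so drop those.
    return [brand for position, brand in sorted(found) if position >= 0]


# Hypothetical AI answer and brand list.
answer = "For small teams, Acme CRM is a solid pick, followed by Globex and Initech."
order = mention_order(answer, ["Globex", "Acme CRM", "Umbrella"])

print(order)
```

A brand's index in the returned list (0 for the first mention) can then be averaged across many prompts to track whether you tend to be named first or last.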

Monitoring AI search visibility is essential because the gap between "invisible" and "first recommendation" can shift in weeks, not months. Brands that track their AI presence can respond to drops before they become entrenched. SwingIntel's AI Readiness Audit tests your brand across nine major AI platforms with thousands of targeted AI queries to measure exactly how and whether AI search engines cite your brand today.

LLMO vs. Traditional SEO: Complement, Not Replacement

LLMO does not replace SEO. It extends it. The foundation of strong SEO (quality content, technical health, authoritative backlinks) still matters because RAG-based AI systems query search engines in real time. Good SEO feeds good AI visibility.

But the reverse is not automatic. You can rank #1 on Google and still be invisible in ChatGPT. The distribution layer, the content structure, and the third-party validation that LLMO adds are what bridge that gap.

| | Traditional SEO | LLMO |
|---|---|---|
| Goal | Rank pages in search results | Get brand mentioned in AI answers |
| Primary channel | Your website | Multiple trusted platforms |
| Success metric | Rankings, organic traffic | AI citations, brand mentions |
| Content format | Long-form, keyword-optimised | Structured, extractable, question-aligned |
| Timeline | 6-12 months for results | 3-8 months for initial citations |
| Maintenance | Periodic updates | Continuous publishing and distribution |

What is changing is the distribution of discovery. Gartner's 25% projection is the headline number, but the underlying shift is more interesting: users who get answers from AI assistants are pre-qualified by the time they click, which is why AI-driven traffic tends to convert at stronger rates than standard organic. Businesses that optimise for both channels will capture traffic from both. Those that ignore LLMO risk becoming invisible to a fast-growing segment of potential customers.

The good news is that most LLMO best practices also improve your traditional SEO. Clear structure, factual density, fast load times, and proper schema markup help you rank on Google and get cited by AI models. The effort compounds.

The Compounding Advantage

LLMO is not a one-time project. Every mention builds your brand's entity strength in AI knowledge, making future mentions more likely. Every citation reinforces your authority, improving your chances of being cited again. The brands that start LLMO early create a compounding advantage that becomes progressively harder for competitors to overcome.

Initial AI citations typically appear within 3-8 months of sustained effort, faster than the 6-12 months traditional SEO takes to produce meaningful rankings. But the window is closing. The longer AI search matures, the more entrenched the current leaders become, and the harder it gets to displace them.

The practical starting point is knowing where you stand. A free AI readiness scan takes 30 seconds and shows you how AI search engines currently see your website. For the full picture, including live citation testing across ChatGPT, Perplexity, Gemini, Claude, Google AI, Grok, DeepSeek, Microsoft Copilot, and Meta AI, the AI Readiness Audit covers every dimension of AI visibility with specific, actionable recommendations for what to publish, where to distribute, and how to start earning the citations your competitors are already getting.

Frequently Asked Questions

What is LLMO (LLM Optimization)?

LLMO is the discipline of structuring your brand's online presence so that large language models (ChatGPT, Perplexity, Gemini, Claude, and others) mention, recommend, and cite your brand when answering user questions. Unlike traditional SEO, which focuses on ranking pages in search results, LLMO focuses on becoming the answer AI models generate.

How is LLMO different from traditional SEO?

Traditional SEO optimises for ranking signals (backlinks, keyword relevance, page speed) to appear in a list of search results. LLMO optimises for AI comprehension signals (entity consistency, extractable content, third-party validation, cross-source authority) to be cited in AI-generated answers. Backlinks alone will not get you cited by AI; you need content structured for extraction and a presence across the trusted sources that LLMs pull from. Only about 12% of AI citations come from URLs ranking in Google's top 10, and the majority don't appear in Google's top 100 at all, which is why strong Google rankings do not automatically translate to AI visibility.

Is LLMO the same as GEO or LLM seeding?

LLMO, GEO (Generative Engine Optimization), and LLM seeding all describe overlapping goals: making your brand visible to AI systems that generate answers. LLMO emphasises optimisation for large language models specifically. GEO is a broader term covering all generative AI engines. LLM seeding describes the distribution layer: publishing and distributing content across trusted third-party sources so LLMs encounter your brand in multiple places. In practice, the strategies and techniques overlap almost entirely.

What is the fastest way to improve my LLMO?

Start by allowing AI crawlers in your robots.txt (GPTBot, ClaudeBot, PerplexityBot, Google-Extended). Then restructure your highest-value pages so each section leads with a direct, factual answer under a descriptive H2 heading. Add JSON-LD structured data (Organization, Article, FAQPage) and front-load key insights in the first 200 words of each section. These changes have the highest impact-to-effort ratio and typically produce measurable citation lift within weeks.

What citation rate should brands aim for in AI search?

Industry benchmarks suggest aiming for a 60% mention rate across at least three major LLMs for your core category queries. Track three metrics: mention rate (how often AI names you), citation rate (how often your domain appears as a source), and sentiment (whether the AI describes your brand positively). These require systematic testing rather than manual spot-checking. Citation testing tools query multiple AI platforms to give you a repeatable baseline.

How often should content be updated for LLMO?

Update your most important pages at least every 90 days. Industry analyses of AI citation patterns suggest that content older than about three months loses citation share as RAG-equipped models prioritise recently updated pages. The goal is not rewriting articles; it is ensuring factual claims, statistics, and recommendations remain current.

How long does it take for LLMO to produce results?

Initial AI citations typically appear within 3-8 months of sustained effort, compared to 6-12 months for traditional SEO. Real-time retrieval improvements from structural and content changes can take effect within days as AI systems re-crawl your pages. Training data updates happen with model retraining cycles, which can take weeks to months. LLMO is not a one-time campaign; it requires continuous publishing and distribution to maintain citation presence.

Do I need to choose between SEO and LLMO?

No. LLMO complements SEO rather than replacing it. Traditional search engines still drive the majority of web traffic, and ranking signals like page speed, mobile usability, and authoritative backlinks also improve content quality for AI models. Most LLMO best practices (clear structure, factual density, and schema markup) directly benefit your Google rankings as well. The brands that win are the ones running both strategies simultaneously.

The question is no longer whether AI will change how customers find your brand. It already has. The question is whether you are the brand AI recommends or the one it leaves out.

Check your brand's AI visibility for free: see your AI Readiness Score in 30 seconds, or explore the full AI Readiness Audit for live citation testing across nine AI platforms.